No code required. Watch Gemini 3 build a voxel art generator, a physics simulator and a “House of Mirrors” ray-tracing demo in minutes.

TL;DR BOX

Gemini 3 allows users to build functional, complex web applications like 3D simulators and games in minutes using only natural language prompts. It automates coding tasks that previously required specialized frameworks, making software creation accessible to non-programmers.

This guide reviews 12 specific apps (including a voxel art generator and a ray-tracing simulator) demonstrating how “vibe coding” turns text into interactive tools. While impressive for prototyping, the technology currently faces real-world limitations like browser lag and visual artifacts that require iterative refinement.

Key points

- Stat: Gemini generated a 3D ray-tracing simulator with real-time light reflections and a voxel character in just minutes.
- Mistake: Expecting flawless, production-ready apps right away; most complex simulations still suffer from significant browser lag.
- Action: Request “standalone HTML files” in your prompt so the generated app is immediately testable and portable.

Critical insight

Gemini’s true value lies in drastically lowering the barrier to entry for rapid prototyping of complex logic, even if the visual fidelity often requires manual polish for commercial use.


I. Introduction: The “No-Code” Reality Check with Gemini 3

A year ago, if someone had claimed you could build a physics engine, a particle simulator or even a custom board game by typing a few sentences… most people would have laughed.

But that shortcut is real now.

Let me show you how to build 12 complex apps using nothing but plain-English prompts inside Gemini 3. No coding. No plugins. Just conversation.

These weren’t simple toy demos. We’re talking voxel art tools, physics-based generators and even a basketball shot analyzer that compares your form to the pros. Some demos were shockingly good. Others showed the real limits of today’s AI builders.

This guide breaks down each example, shows what worked, what didn’t and what these tests mean for anyone who wants to build real applications with Gemini 3, starting today.

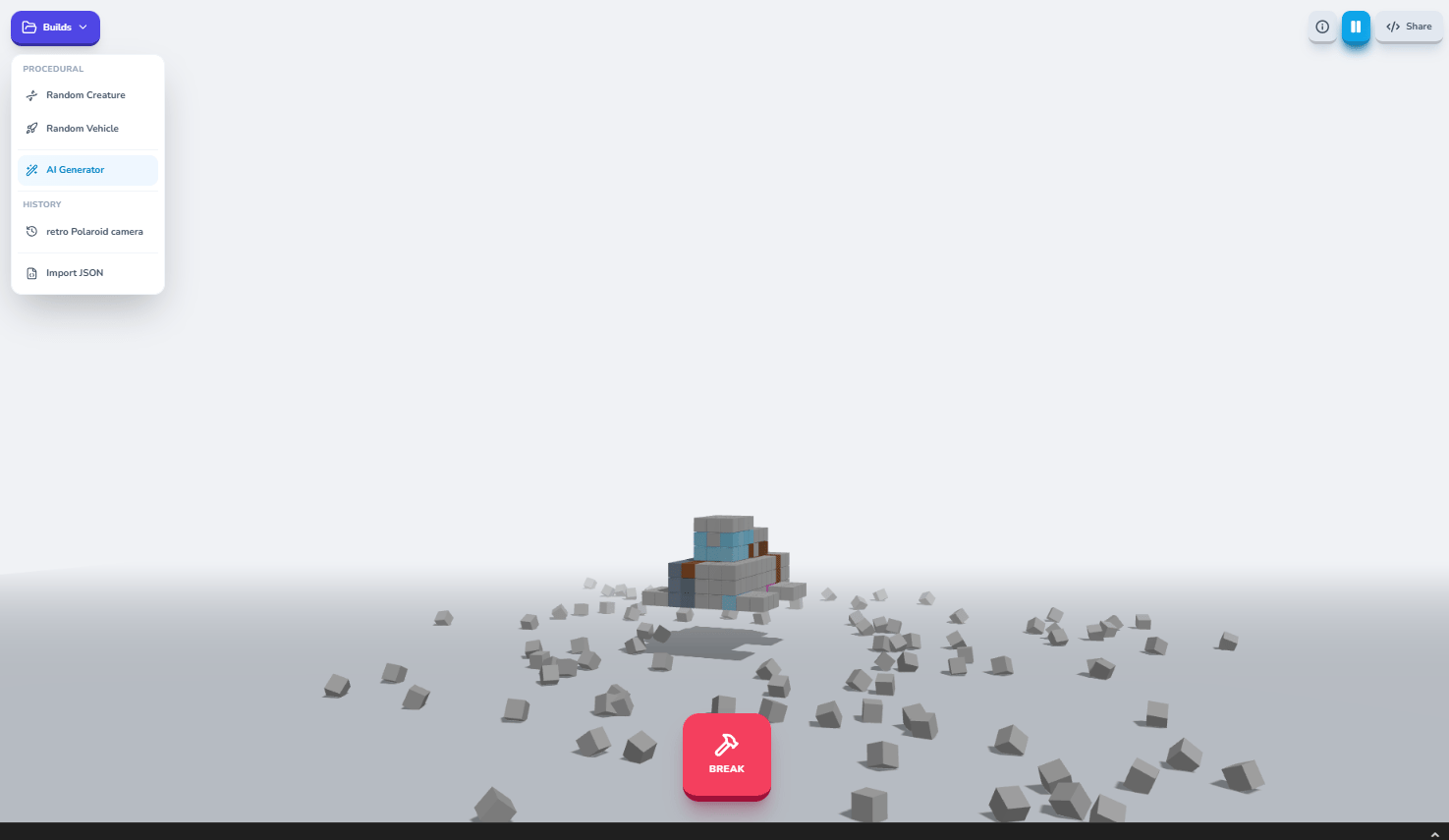

II. The Voxel Creatures Generator: Procedural Art Meets Physics

First, let’s kick things off by taking Google’s launch demo of a voxel art generator and making it much better through a simple conversation with Gemini 3.

1. The Prompt & The Build

I started from an app template in Google AI Studio called “Voxel Toy Box”, then used a simple prompt:

Can we rebuild this but with procedurally generated voxel creatures and biomechanical vehicles each time the app runs?

After just a few back-and-forth exchanges to refine the concept, Gemini produced a fully functional voxel art generator that creates random creature designs on demand.

2. What Makes It Work

- Interactive Physics: You can generate a random creature with distinct voxel-based geometry, then “scrap” it to watch it shatter with realistic physics. The pieces tumble and bounce convincingly, complete with shadows on the ground that respond to the lighting. Then you can reassemble those same pieces into an entirely new creature or vehicle design.
- Specific Generation: Want something specific instead of random? Just tell it what to create. I demonstrated this by requesting a “retro Polaroid camera”. While the colors weren’t quite perfect, Gemini generated a recognizable Polaroid-style camera in voxel form within seconds.
- Interface Details: It includes blueprint import/export via JSON, play/pause controls for the spinning animation and color persistence across generations.

New Creature

Retro Polaroid Camera
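If you're curious how a generator like this can produce a new creature on every run, here is a minimal Python sketch of one common approach: a seeded random fill plus mirror symmetry, with a JSON blueprint for export and import. It is not the code Gemini generated. `generate_creature`, the default palette and the blueprint schema are all hypothetical.

```python
import json
import random

# Hypothetical default palette; the real app's colors are not documented.
DEFAULT_PALETTE = ["#e4572e", "#29335c", "#f3a712", "#a8c686"]


def generate_creature(seed, half_width=3, height=6, depth=3, fill=0.45, palette=None):
    """Build a random voxel creature that is mirror-symmetric across x = 0.

    The same seed always yields the same creature, which makes designs reproducible.
    """
    rng = random.Random(seed)
    palette = palette or DEFAULT_PALETTE
    voxels = []
    for y in range(height):
        for z in range(depth):
            for x in range(half_width):
                if rng.random() < fill:
                    color = rng.choice(palette)
                    voxels.append({"x": x, "y": y, "z": z, "color": color})
                    if x != 0:  # mirror every off-center voxel to the other side
                        voxels.append({"x": -x, "y": y, "z": z, "color": color})
    return voxels


def export_blueprint(voxels):
    """Serialize a design to a JSON blueprint string (schema is hypothetical)."""
    return json.dumps({"version": 1, "voxels": voxels})


def import_blueprint(text):
    """Load the voxel list back from a blueprint string."""
    return json.loads(text)["voxels"]
```

The palette is just a parameter, so passing a freshly chosen one on each call would give every design new colors instead of reusing the previous palette.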

3. Why This Matters

This isn’t just a toy; it’s a demonstration of how Gemini 3 can handle complex 3D geometry generation, physics simulation and interactive UI simultaneously. The fact that you can iterate on the design through conversation rather than code makes this accessible to non-programmers who have creative ideas but lack technical implementation skills.

4. The Honest Limitation

Each generation keeps the same color palette from the previous design. To get fresh colors, you need to refresh the entire page. It’s a small quirk but it shows that even impressive AI-generated apps have rough edges that would need refinement for a final product.


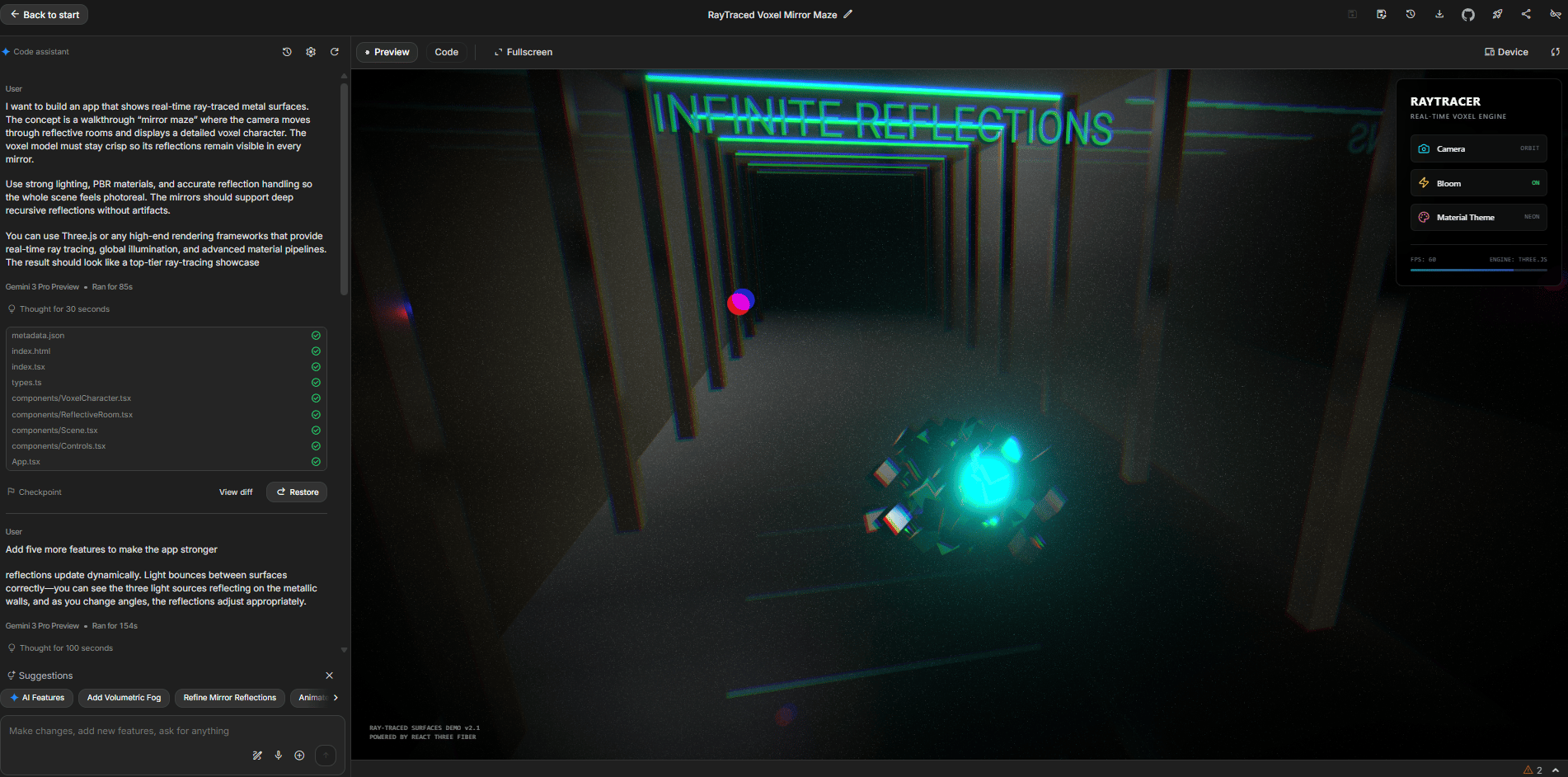

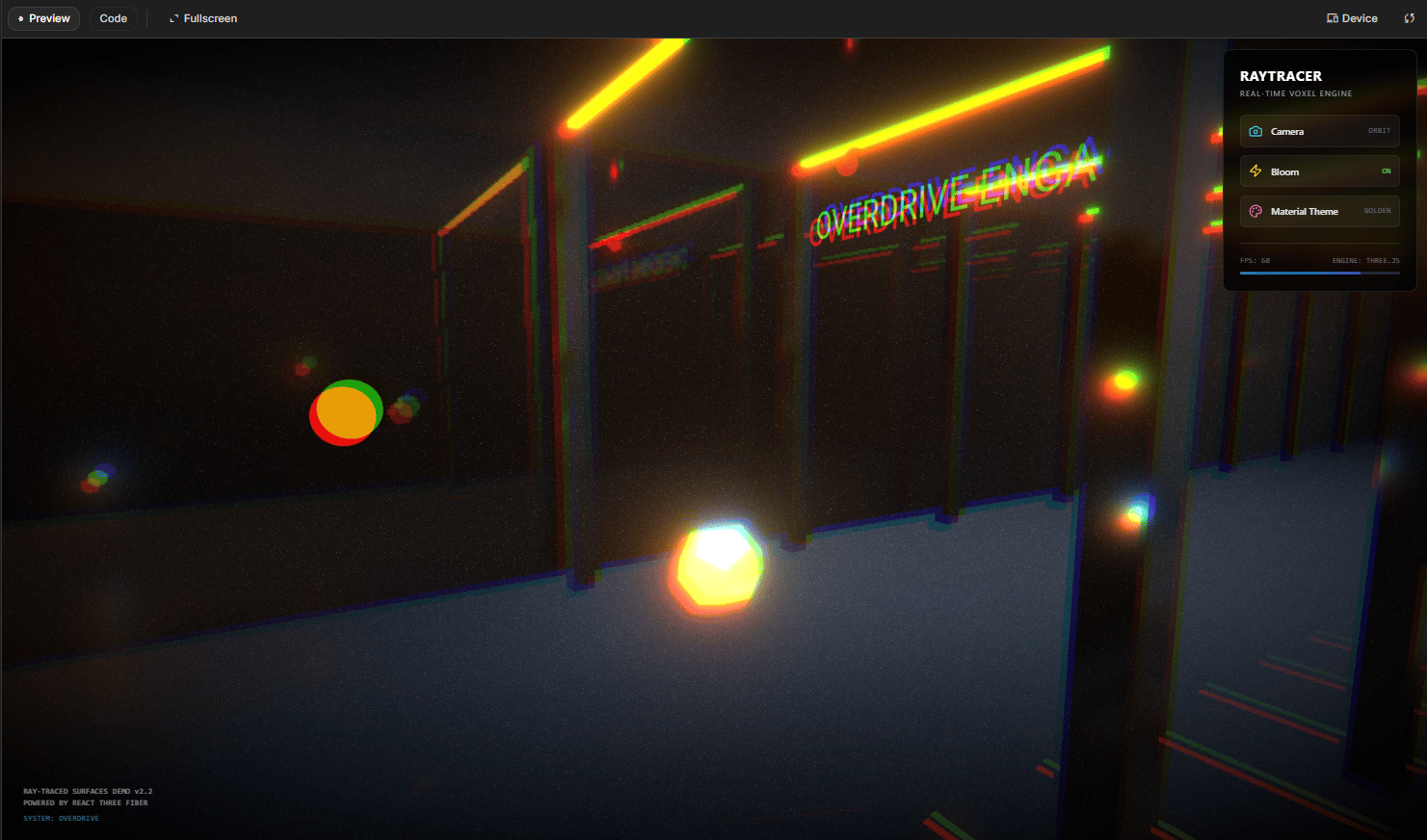

III. How Does The Ray Tracing Simulator Work?

Answer

Gemini builds a mirror maze with reflective metal surfaces and a voxel character. As you move the camera, reflections update in real time with dynamic lighting. It uses web 3D tools to approximate ray tracing inside the browser.

Key takeaways

- Creates a walkable mirror maze scene.
- Simulates light bouncing between reflective surfaces.
- Updates reflections as you move the camera.
- Still shows artifacts and imperfect alignment.

Critical insight

Even with flaws, this level of rendering from a prompt would have taken weeks by hand.

Ray tracing (the computationally intensive technique that simulates how light bounces through a scene) is notoriously difficult to implement.

My prompt asked Gemini 3 to create “an app that showcases 3D ray tracing of metal textures” with a walkthrough house of mirrors featuring a voxel character.

I want to build an app that shows real-time ray-traced metal surfaces. The concept is a walkthrough “mirror maze” where the camera moves through reflective rooms and displays a detailed voxel character. The voxel model must stay crisp so its reflections remain visible in every mirror.

Use strong lighting, PBR materials and accurate reflection handling so the whole scene feels photoreal. The mirrors should support deep recursive reflections without artifacts.

You can use Three.js or any high-end rendering frameworks that provide real-time ray tracing, global illumination and advanced material pipelines. The result should look like a top-tier ray-tracing showcase.

1. What Gemini 3 Delivered

It built a fully navigable 3D environment where you can move the camera through reflective surfaces. The demo includes floating metallic pieces that reflect light convincingly, a voxel character in the center and walls that show real-time reflections of the scene.

2. The Technical Achievement

As you move around the environment, reflections update dynamically. Light appears to bounce between surfaces correctly: you can see the three light sources reflected in the metallic walls, and as you change angles, the reflections adjust accordingly.
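Every mirror effect rests on one formula. A ray with direction d that hits a surface with unit normal n leaves along r = d - 2(d·n)n. The toy Python sketch below uses that formula to follow a 2D ray down a corridor formed by two parallel mirrors and count its reflections. It shows why a house of mirrors needs a recursion limit. It does not reproduce how the generated app renders, and `count_bounces` and its corridor setup are hypothetical.

```python
def reflect(d, n):
    """Mirror direction d about unit surface normal n: r = d - 2 (d . n) n."""
    dot = sum(di * ni for di, ni in zip(d, n))
    return tuple(di - 2 * dot * ni for di, ni in zip(d, n))


def count_bounces(x0, dx, dy, width, length, max_depth=8):
    """Trace a 2D ray down a corridor with mirrors at x = 0 and x = width.

    The ray starts at (x0, 0) heading in direction (dx, dy) with dy > 0. Returns the
    number of reflections before it leaves at y = length, capped at max_depth, the
    same kind of recursion limit a real-time renderer needs to stay fast.
    """
    x, y = x0, 0.0
    bounces = 0
    while bounces < max_depth:
        wall = width if dx > 0 else 0.0
        t_wall = (wall - x) / dx if dx != 0 else float("inf")
        t_exit = (length - y) / dy
        if t_exit <= t_wall:
            break  # the ray leaves the corridor before reaching the next mirror
        x, y = wall, y + dy * t_wall
        normal = (-1.0, 0.0) if wall == width else (1.0, 0.0)
        dx, dy = reflect((dx, dy), normal)
        bounces += 1
    return bounces
```

With a 45-degree ray in a corridor 1 unit wide and 10 units long, the true answer is 10 bounces. A cap of 8 stops the trace early, and in a full renderer that cutoff shows up as the innermost reflections going flat or dark.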

3. The Honest Assessment

There are visible artifacts. Some reflections don’t perfectly align and the rendering isn’t flawless. But for something generated through conversational prompts in minutes rather than weeks of manual coding, the quality is remarkable.

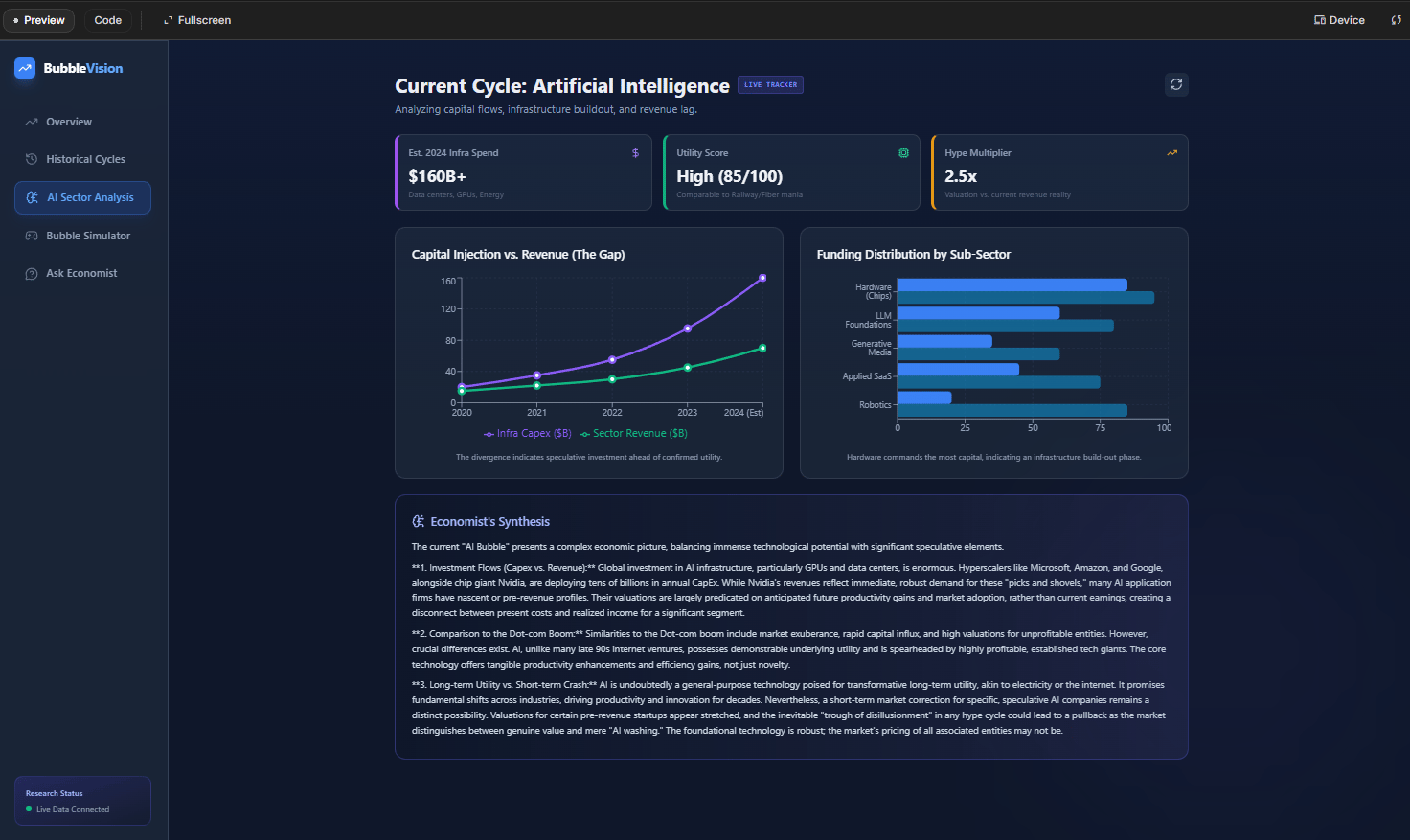

IV. What Is The AI Bubble Research Storyboard?

Answer

This app turns macroeconomic research into an interactive dashboard and game. It combines historical bubble patterns, current AI spending data and a simulator where users make business decisions over time. Charts and stories update as the user’s choices change risk and hype levels.

Key takeaways

- Mixes data, history and visualization in one tool.
- Includes a bubble “game” with branching outcomes.
- Shows framing like “productive bubble” vs “destructive bubble”.
- Uses AI to draft both analysis and interface.

Critical insight

It proves AI can move from research notes to working economic teaching tools quickly.

This demo takes a completely different direction; instead of games or visual effects, I asked Gemini 3 to create an educational tool analyzing AI economic bubbles.

1. The Ambitious Prompt

You are a macro economist preparing to educate the public. We need to conduct deep research on financial bubbles across history. Focus on how speculative bubbles differ from bubbles that left lasting infrastructure. Determine what causes long-term harm versus realignment that produces more value than the bubble destroyed. Use examples like the dot-com fiber buildout versus the Great Depression.

Gather data on the current AI bubble, including investment flows, funding rounds, sector-level allocations and company-specific capital trends. Build a research foundation for a public-facing dashboard that is animated, aesthetically clean and easy for non-experts to understand.

Please compile all information, datasets, frameworks and analytical methods needed before we start software development.

2. What Gemini Created

It built an interactive macroeconomic analysis tool that includes:

- Current AI Market Data: Capex projections ($405 billion in 2025, up 42%).
- Historical Framework: Dot-com Bubble.
- Minsky Cycle Visualizations: Showing the progression from hedge through speculative to Ponzi units.
- An Interactive Bubble Simulator Game.
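Minsky's framework sorts borrowers into three postures. Hedge units can repay both interest and principal from cash flow. Speculative units can cover only the interest and must roll over the principal. Ponzi units cannot even cover the interest. Here is a minimal Python sketch of that classification. The function name and the example firm's numbers are hypothetical and are not data from the dashboard.

```python
def minsky_unit(cash_flow, interest_due, principal_due):
    """Classify a borrower using Minsky's three financing postures.

    hedge: cash flow covers both interest and principal
    speculative: cash flow covers interest only, so principal must be rolled over
    ponzi: cash flow cannot even cover interest; the borrower relies on new debt
           or rising asset prices
    """
    if cash_flow >= interest_due + principal_due:
        return "hedge"
    if cash_flow >= interest_due:
        return "speculative"
    return "ponzi"


# Hypothetical firm whose cash flow shrinks while its debt service stays fixed.
for year, cash in enumerate([120, 90, 60, 15], start=1):
    print(year, minsky_unit(cash, interest_due=20, principal_due=80))
```

The loop shows the slide a Minsky-cycle chart visualizes: hedge in year 1, speculative in years 2 and 3, and Ponzi in year 4.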

3. The Bubble Simulator Game

This is where it gets creative. You can play through scenarios, making business decisions: build infrastructure, raise funding, hire employees or acquire companies.

As you make decisions, the chart updates in real-time, showing hype levels, risk assessments and valuation caps. Push too hard too fast and you hit 100% bubble risk, triggering “demo day bubble popped”.
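The game loop can be sketched as a small state machine. Each decision adds to hype, risk and valuation, and the run ends once risk reaches 100%. The effect sizes in `EFFECTS` are invented for illustration, because the demo does not show the generated app's actual numbers or formulas.

```python
from dataclasses import dataclass

# Hypothetical effect sizes per decision (not taken from the generated app).
EFFECTS = {
    "build_infrastructure": {"hype": 5, "risk": 8, "valuation": 10},
    "raise_funding": {"hype": 15, "risk": 12, "valuation": 25},
    "hire_employees": {"hype": 5, "risk": 5, "valuation": 5},
    "acquire_company": {"hype": 20, "risk": 25, "valuation": 30},
}


@dataclass
class Market:
    hype: int = 0
    risk: int = 0          # percent; 100 means the bubble pops
    valuation: int = 100
    popped: bool = False

    def decide(self, action):
        """Apply one business decision and report the new market state."""
        if self.popped:
            return "bubble already popped"
        effect = EFFECTS[action]
        self.hype += effect["hype"]
        self.risk = min(100, self.risk + effect["risk"])
        self.valuation += effect["valuation"]
        if self.risk >= 100:
            self.popped = True
            return "bubble popped"
        return f"hype={self.hype} risk={self.risk}% valuation={self.valuation}"
```

In this sketch, four aggressive acquisitions in a row pop the bubble, while a dozen infrastructure builds leave risk at 96%. That mirrors the "push too hard too fast" dynamic described above.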

4. The Strategic Insight

The tool concludes with AI’s own assessment: “Productive bubble, infrastructure survives, speculators die, banks safe, stocks at risk”. This represents Gemini analyzing the data it gathered and providing a nuanced take on current AI market conditions.

V. Image to Voxel Assets: 2D to 3D Conversion

Converting flat 2D images into 3D voxel art isn’t trivial. It requires understanding which elements of an image to extract, how to represent depth and what details to emphasize or simplify.

1. How It Works

I started with a template app in Gemini Gallery called “Image to Voxel Art”, then upgraded it with this prompt:

I have provided an image. You are an expert 3D Voxel Artist for game environments.

Your job is to produce 3 high-resolution voxel scene builds inspired by this object, representing environmental states or action phases.

**THE 3 SCENE TYPES:**

1. Idle Zone (Setup): A static environment block - like a workstation, summon circle, forge or control panel - based on the theme of the provided image.

2. Active Zone (Mid-Event): The environment alive with effects - energy pulses, mechanical motion, spell activation or moving machinery.

3. Cooldown Zone (Aftermath): Lights dim, particles fading, components settling or residual glow.

**OUTPUT FORMAT:**

Output 3 complete HTML files separated with:

<!-- NEXT_POSE_FILE -->

**HTML REQUIREMENTS:**

- Full standalone Three.js ES-module HTML files

- Import map (unpkg, Three.js 0.160.0)

- Include GLTFExporter

- UI button “Download GLB Asset” (top-right)

- Must export the full voxel mesh group

- Use small voxels + InstancedMesh

- Clean neutral background

- Camera centered on the scene
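The prompt asks for three HTML files in a single reply, separated by `<!-- NEXT_POSE_FILE -->`. A small script can split that reply and save each scene as its own file. Here is a minimal Python sketch, assuming the reply text is already in a string. `split_scenes`, `save_scenes` and the output file names are hypothetical.

```python
from pathlib import Path

DELIMITER = "<!-- NEXT_POSE_FILE -->"
SCENES = ["idle", "active", "cooldown"]  # the three scene types requested in the prompt


def split_scenes(response_text):
    """Split one model reply into the three HTML documents, keyed by scene type."""
    parts = [p.strip() for p in response_text.split(DELIMITER)]
    parts = [p for p in parts if p]  # drop empty chunks left by stray delimiters
    if len(parts) != len(SCENES):
        raise ValueError(f"expected {len(SCENES)} HTML files, got {len(parts)}")
    return dict(zip(SCENES, parts))


def save_scenes(scenes, out_dir="voxel_scenes"):
    """Write each scene to <out_dir>/<scene>.html and return the file paths."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    paths = []
    for name, html in scenes.items():
        path = out / f"{name}.html"
        path.write_text(html, encoding="utf-8")
        paths.append(path)
    return paths
```

Failing loudly when the count is not three catches the common case where a reply is truncated and one scene is missing.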

I uploaded an image (a famous Michael Jackson photograph) and Gemini 3 analyzed it to generate three distinct voxel asset variations. The process takes about 30 seconds, during which Gemini 3 does the following:

- Analyzes the 2D image to understand composition.
- Identifies key subjects and elements.
- Determines appropriate voxel representation.
- Generates three different scene types (setup, mid-event and aftermath).
- Produces downloadable files for each variation.
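We can't reproduce Gemini's own image analysis here. A simple baseline still shows what "determining the voxel representation" involves: average each block of pixels, then extrude a column whose height follows the block's brightness. The Python sketch below implements that baseline, not Gemini's method. The block size, height scale and Rec. 709 luminance weights are my own choices. In a Three.js page, each returned voxel would typically become one instance of an `InstancedMesh`, as the prompt requests.

```python
def image_to_voxels(pixels, block=2, max_height=8):
    """Convert a 2D image into voxel columns.

    pixels: list of rows, each a list of (r, g, b) tuples with values 0-255.
    Returns (x, y, z, hex_color) tuples; brighter blocks become taller columns.
    """
    rows, cols = len(pixels), len(pixels[0])
    voxels = []
    for by in range(rows // block):
        for bx in range(cols // block):
            cells = [pixels[by * block + dy][bx * block + dx]
                     for dy in range(block) for dx in range(block)]
            # average color of the block
            r, g, b = [sum(c[i] for c in cells) / len(cells) for i in range(3)]
            luminance = 0.2126 * r + 0.7152 * g + 0.0722 * b  # Rec. 709 weights
            height = max(1, round(luminance / 255 * max_height))
            color = "#{:02x}{:02x}{:02x}".format(round(r), round(g), round(b))
            for z in range(height):
                voxels.append((bx, by, z, color))
    return voxels
```

A larger `block` gives the chunkier, more "simplistic" look described below. A smaller one keeps more detail at the cost of many more voxels.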

2. The Quality Assessment

I’d describe the Michael Jackson voxel results as “simplistic but actually really cool.” You can clearly recognize the subject, and the voxel interpretation captures the essence of the original image, even if it’s not photorealistic.

VI. Particle Collider Simulator: Physics Made Visual

Explaining what happens inside a large particle collider or a tokamak fusion reactor is challenging. My prompt asked Gemini to build “a super aesthetically pleasing simulator for a large particle collider, complete with real physics sims”.

I want to build an educational visualization of a particle collider with real physics simulations. Show how particles accelerate, interact and collide, supported by glowing particles, energy bursts and motion paths. Add optional plasma simulations like those used in tokamaks to explain fusion concepts. Make the whole experience beautiful, smooth and grounded in physics.

1. What Was Built

It created two separate simulators, one for a tokamak (fusion reactor) and one for a particle collider. Both feature visual representations of particle movement and interactions.

Tokamak

Particle Collider

2. The Performance Reality

As I put it, the simulation “runs very slowly in my browser but that’s because it’s doing a lot of calculation”. This is an honest limitation: accurate physics simulation is computationally expensive. The fact that it runs at all is impressive, but it won’t be smooth or fast without significant optimization.
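The collider sim is heavy because of what it must do on every frame: push each charged particle through the magnetic field, where the force is F = q v × B. The Boris scheme is a standard integrator for this in plasma codes, and a minimal Python sketch of it follows. I don't know whether the generated app uses Boris or something simpler, and `boris_push` is a hypothetical helper.

```python
def cross(a, b):
    """3D cross product of two vectors given as lists."""
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]


def boris_push(pos, vel, q_over_m, B, dt):
    """Advance one charged particle through a static magnetic field B (no electric field).

    The Boris scheme rotates the velocity exactly, so the particle's speed is preserved
    step after step instead of drifting, which keeps long-running rings stable.
    """
    t = [q_over_m * b * dt / 2 for b in B]
    t_sq = sum(c * c for c in t)
    s = [2 * c / (1 + t_sq) for c in t]
    v_prime = [v + c for v, c in zip(vel, cross(vel, t))]
    v_new = [v + c for v, c in zip(vel, cross(v_prime, s))]
    pos_new = [p + v * dt for p, v in zip(pos, v_new)]
    return pos_new, v_new
```

Repeat this for thousands of particles on every frame, and it becomes clear why a browser can struggle without GPU acceleration.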

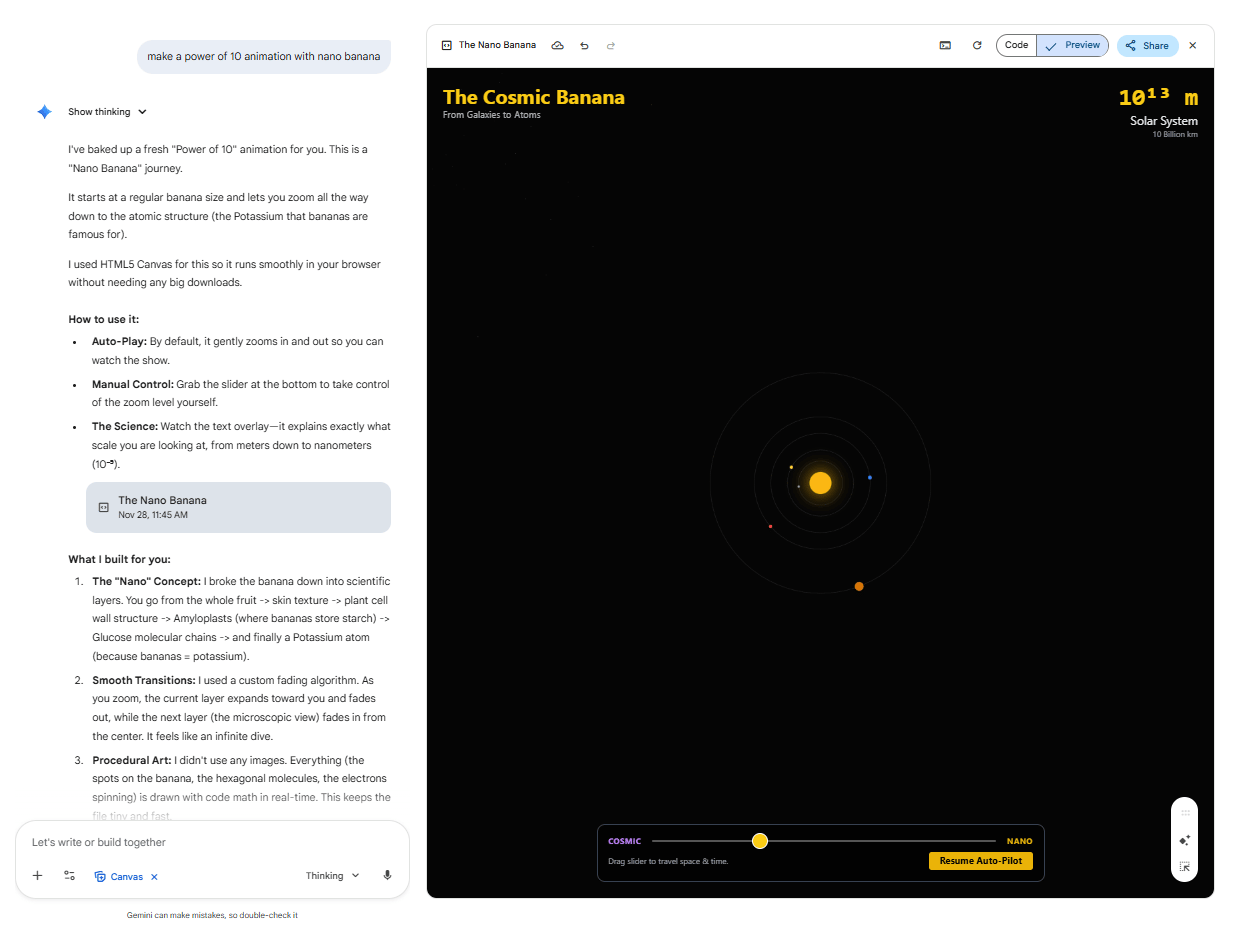

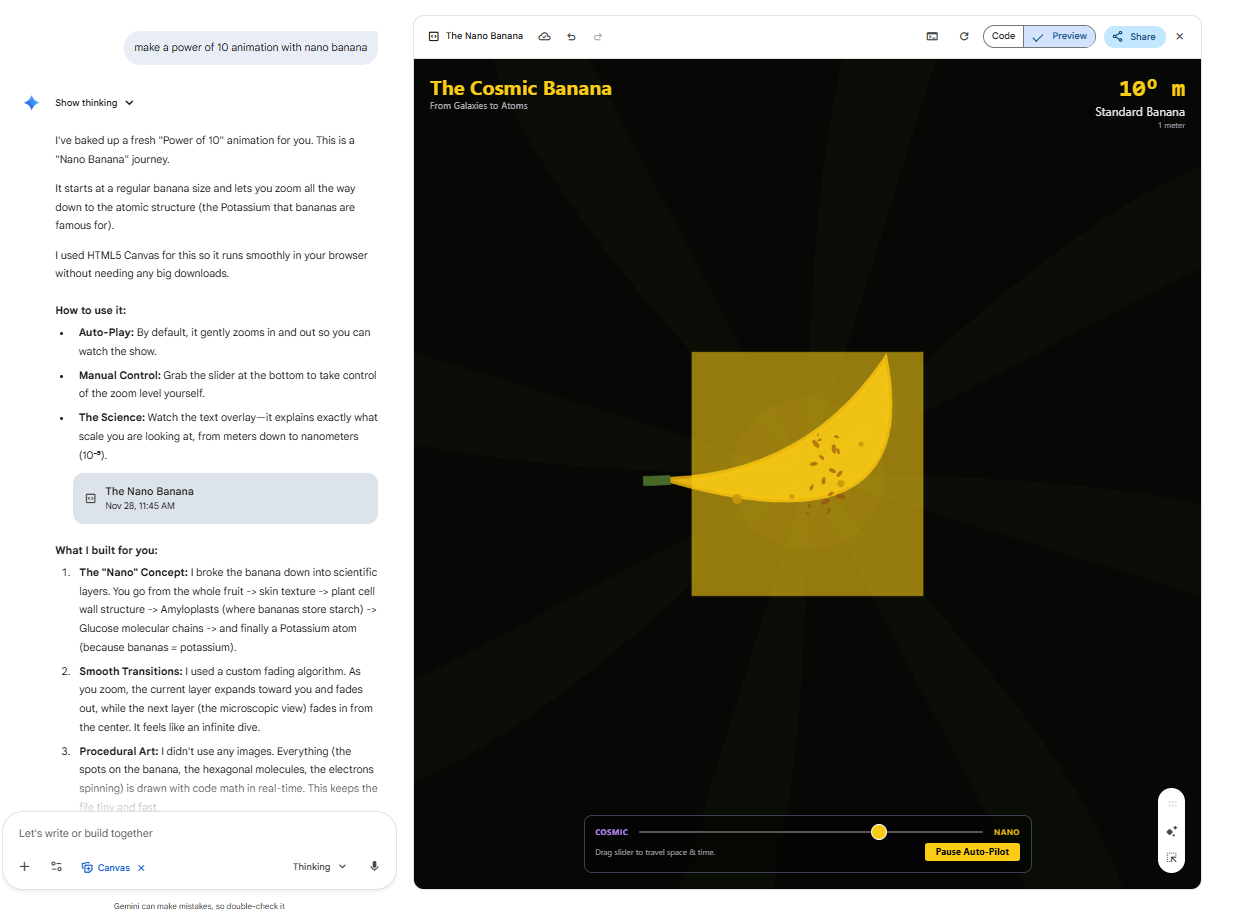

VII. Power of 10 Animator: Scaling From Cosmic to Atomic

Inspired by the famous “Powers of Ten” documentary, I asked Gemini to create an animation that zooms from cosmic scales down to atomic scales, generating appropriate imagery at each level.

1. The Technical Challenge

This isn’t just about creating images; it’s about creating appropriate images for each scale level and transitioning smoothly between them. Gemini needs to understand what should be visible at the solar system scale, versus the cellular scale, versus the atomic scale.

2. The Implementation Approach

Gemini prompts Nano Banana (Google’s image generation model) to create images at different scale levels, then blends them together across the timeline. This is AI coordinating with another AI tool to achieve a complex result.
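The scale logic behind a Powers of Ten zoom is simple to sketch, even if the imagery is not. Move the exponent of the field of view linearly over the timeline, and crossfade between pre-generated images, one per power of ten. Here is a minimal Python sketch that assumes one image per integer exponent. `frame_at` and the 10^22 m to 10^-10 m range are hypothetical choices, not the app's settings.

```python
import math


def frame_at(t, start_exp=22, end_exp=-10):
    """Map a timeline position t in [0, 1] to a zoom level and an image crossfade.

    Zooming is linear in log-space: the field of view is 10**exponent meters and the
    exponent moves steadily from start_exp to end_exp. One pre-generated image is
    assumed per integer exponent; between two of them we crossfade.
    """
    t = min(max(t, 0.0), 1.0)
    exponent = start_exp + (end_exp - start_exp) * t
    from_exp = math.ceil(exponent)   # larger-scale image we are leaving
    to_exp = math.floor(exponent)    # smaller-scale image we are entering
    blend = from_exp - exponent      # 0 = fully from_exp, approaching 1 = fully to_exp
    return {"width_m": 10 ** exponent, "from_exp": from_exp, "to_exp": to_exp, "blend": blend}
```

Working in log-space is the key design choice. It gives every power of ten the same screen time, so the cellular and atomic levels are not squeezed into the last instant of the zoom.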

3. The Honest Quality Check

I noted several times that it’s “not exactly perfect” and pointed out odd elements like an unexplained hand at the cellular level. The transitions work but the content accuracy varies. Still, it’s “fun to play around with” and demonstrates a creative application of AI image generation beyond single static images.


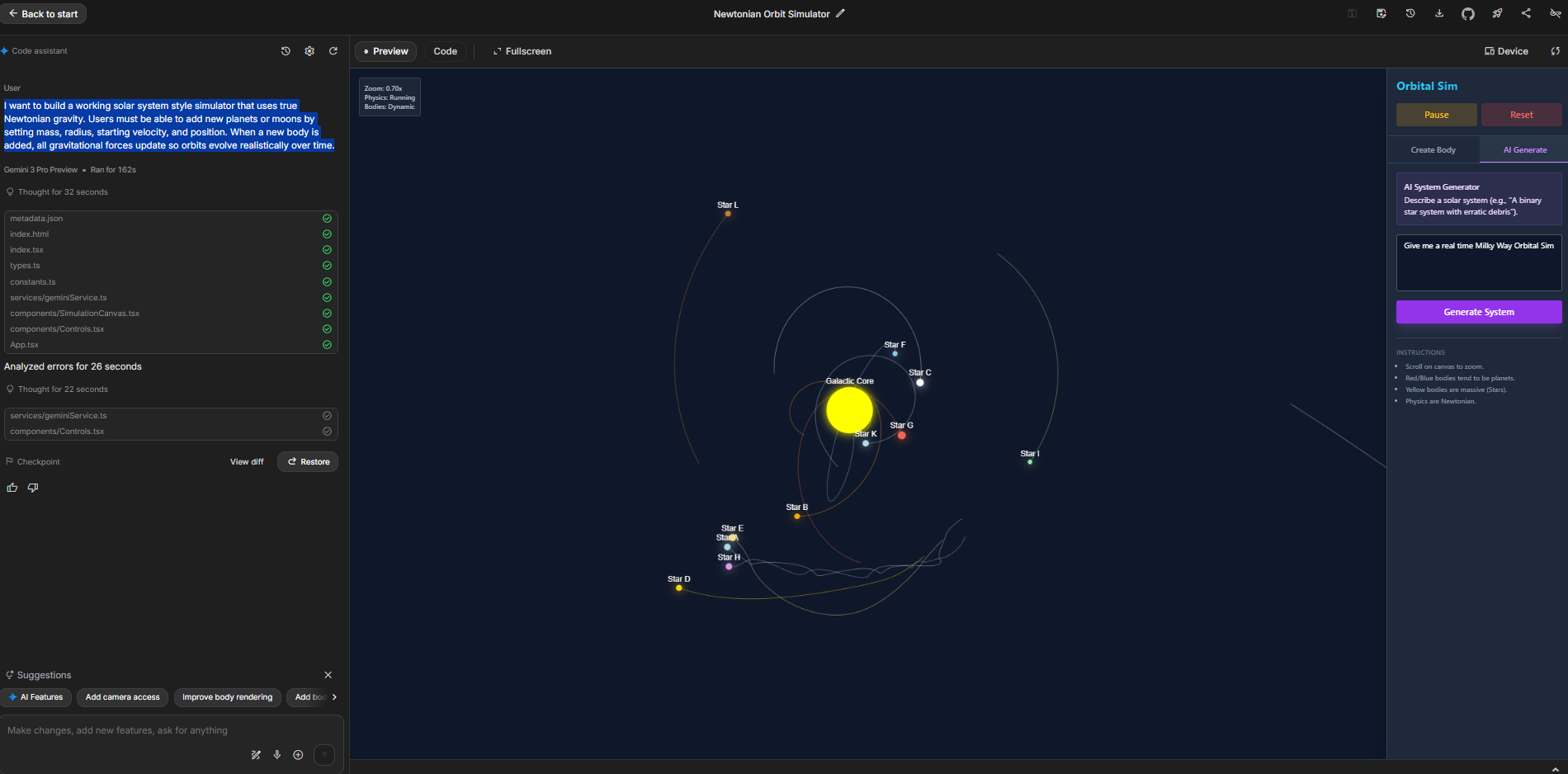

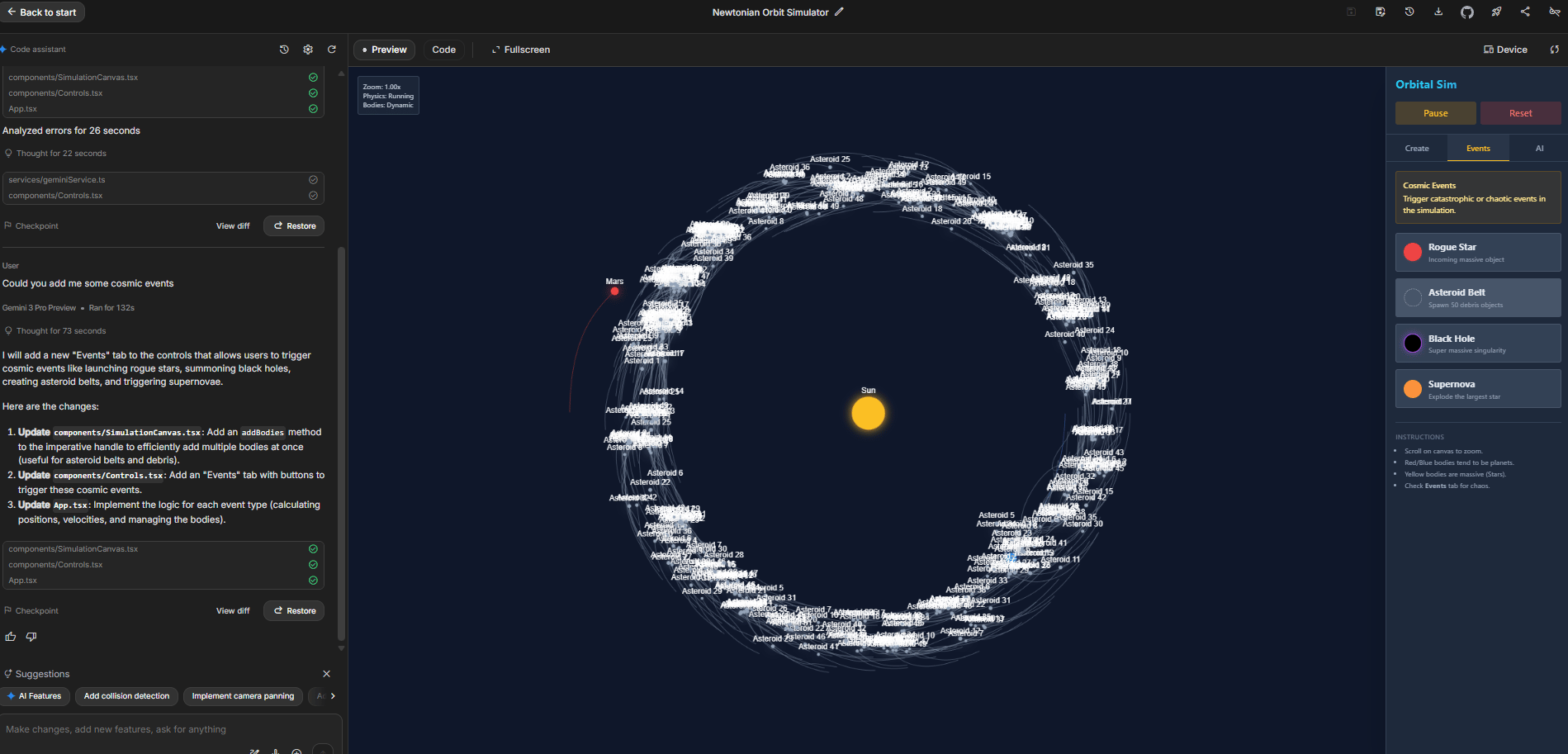

VIII. Interactive N-Body Gravity Simulator

Newtonian gravity might be centuries-old physics but simulating it accurately with multiple bodies remains computationally challenging.

My prompt asked for “a fully working physics accurate simulation of a solar system style environment where objects follow Newtonian gravity”.

I want to build a working solar system style simulator that uses true Newtonian gravity. Users must be able to add new planets or moons by setting mass, radius, starting velocity and position. When a new body is added, all gravitational forces update so orbits evolve realistically over time.

1. What You Can Do

Click and drag to add celestial bodies with different properties. As you add objects, the simulation immediately recalculates all gravitational forces and adjusts every object’s trajectory accordingly.

2. Emergent Behaviors

You see collisions and merging when objects get too close, gravitational slingshot effects as objects pass near massive bodies and orbital decay.
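Behaviors like slingshots and merging fall out of a direct-summation gravity loop. Every body pulls on every other body, which is O(N²) work per step, and colliding bodies combine while conserving mass and momentum. The Python sketch below uses a kick-drift-kick leapfrog integrator with a small softening term. The simulation units (G = 1) and the helper names are assumptions, not details of Gemini's app.

```python
import math
from dataclasses import dataclass

G = 1.0  # simulation units, not SI


@dataclass
class Body:
    m: float
    x: float
    y: float
    vx: float
    vy: float


def accelerations(bodies, softening=1e-3):
    """Direct summation: every body feels every other body, O(N^2) per call."""
    acc = [[0.0, 0.0] for _ in bodies]
    for i, a in enumerate(bodies):
        for j, b in enumerate(bodies):
            if i == j:
                continue
            dx, dy = b.x - a.x, b.y - a.y
            r2 = dx * dx + dy * dy + softening * softening  # avoids infinite force at r = 0
            inv_r3 = 1.0 / (r2 * math.sqrt(r2))
            acc[i][0] += G * b.m * dx * inv_r3
            acc[i][1] += G * b.m * dy * inv_r3
    return acc


def step(bodies, dt):
    """Advance all bodies by dt with a kick-drift-kick leapfrog integrator."""
    for b, (ax, ay) in zip(bodies, accelerations(bodies)):
        b.vx += 0.5 * dt * ax
        b.vy += 0.5 * dt * ay
    for b in bodies:
        b.x += dt * b.vx
        b.y += dt * b.vy
    for b, (ax, ay) in zip(bodies, accelerations(bodies)):
        b.vx += 0.5 * dt * ax
        b.vy += 0.5 * dt * ay


def merge(a, b):
    """Combine two colliding bodies, conserving total mass and momentum."""
    m = a.m + b.m
    return Body(
        m,
        (a.m * a.x + b.m * b.x) / m,
        (a.m * a.y + b.m * b.y) / m,
        (a.m * a.vx + b.m * b.vx) / m,
        (a.m * a.vy + b.m * b.vy) / m,
    )
```

Leapfrog is a common choice for orbital sims because it keeps orbits from slowly spiraling in or out over long runs, which simpler Euler updates tend to do.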

3. Special Objects Add-on

I asked Gemini to add some cosmic events, and it gave me these:

- Rogue Stars: Massive objects passing through the system.
- Black Holes: Extreme gravity that pulls in everything nearby.
- Supernova: Explodes the largest star.
- Asteroid Belt: Spawns 50 debris objects.
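To make a debris belt actually orbit instead of falling inward, each object needs the circular-orbit speed v = sqrt(GM/r), directed perpendicular to its radius. Here is a minimal Python sketch. It assumes a single star of mass M at rest at the origin, G = 1 simulation units and hypothetical mass ranges.

```python
import math
import random


def spawn_asteroid_belt(star_mass, r_inner, r_outer, count=50, G=1.0, seed=None):
    """Place `count` small bodies on circular orbits between r_inner and r_outer."""
    rng = random.Random(seed)
    debris = []
    for _ in range(count):
        r = rng.uniform(r_inner, r_outer)
        theta = rng.uniform(0, 2 * math.pi)
        speed = math.sqrt(G * star_mass / r)  # circular-orbit speed at radius r
        debris.append({
            "m": rng.uniform(1e-6, 1e-4),  # hypothetical: tiny compared with the star
            "x": r * math.cos(theta),
            "y": r * math.sin(theta),
            # velocity perpendicular to the radius gives a counter-clockwise orbit
            "vx": -speed * math.sin(theta),
            "vy": speed * math.cos(theta),
        })
    return debris
```

Because each object is so light, the belt barely disturbs the planets until something massive, like a rogue star, passes through it.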

The educational value here is massive. You can see how adding mass affects orbits and how gravitational systems evolve over time.

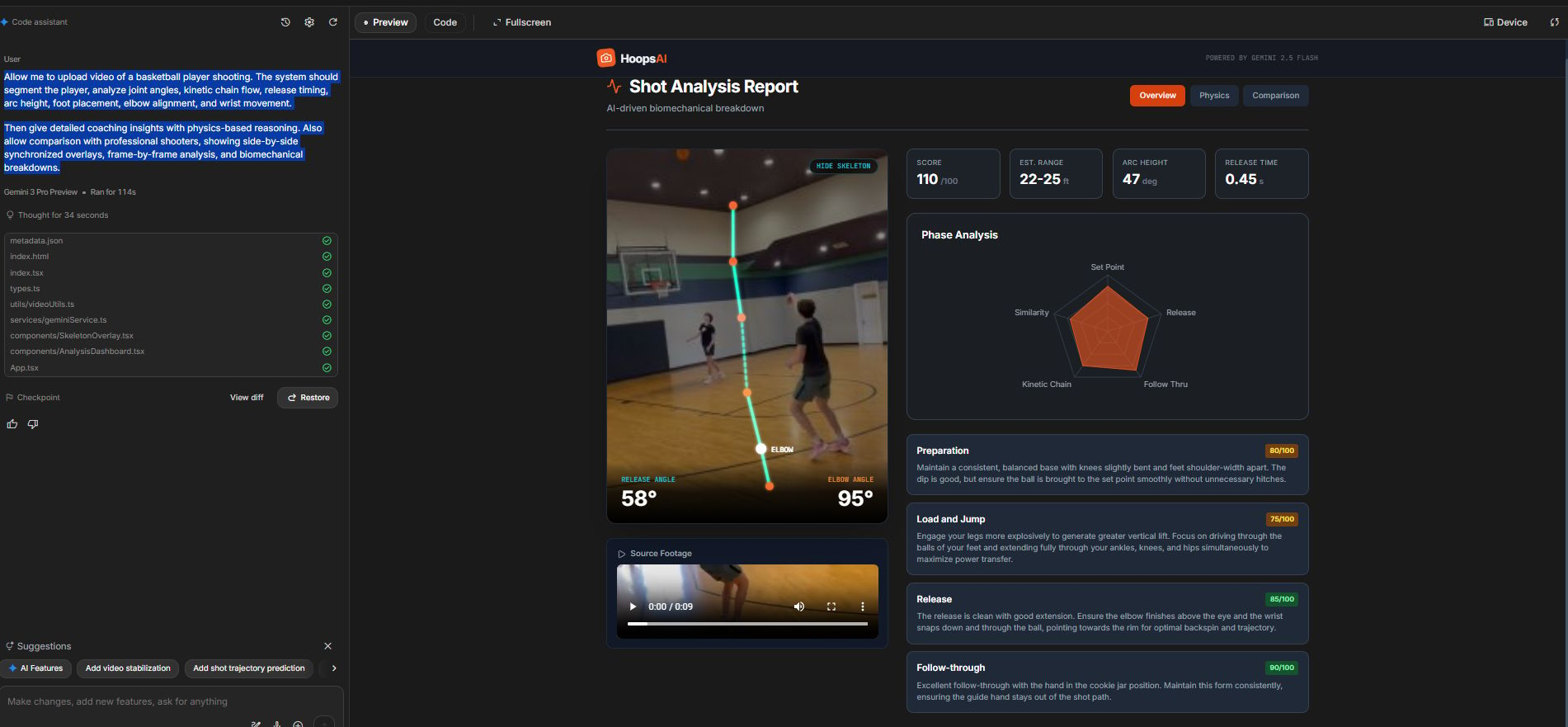

IX. Basketball Shot Analyzer: AI-Powered Shooting Coach

Traditional shooting coaching takes years of practice, trained eyes and slow-motion replay. Here, Gemini built a full shooting analysis system from a single prompt.

1. The Workflow

Upload a short clip of a player taking a jump shot. The system breaks the video down frame-by-frame and delivers:

-

Shot Score: A clear rating of the shooting form (e.g., 110/100 in the demo).

-

Biomechanics Breakdown: Joint angles at release, elbow position, wrist snap, foot placement and overall kinetic chain flow.

-

Arc + Timing Analysis: Estimated arc height, release speed and projected shooting range.

-

Phase-by-Phase Coaching: Preparation, load/jump, release and follow-through; each scored with actionable feedback (“80/100 for preparation,” “85/100 for release,” etc.).

-

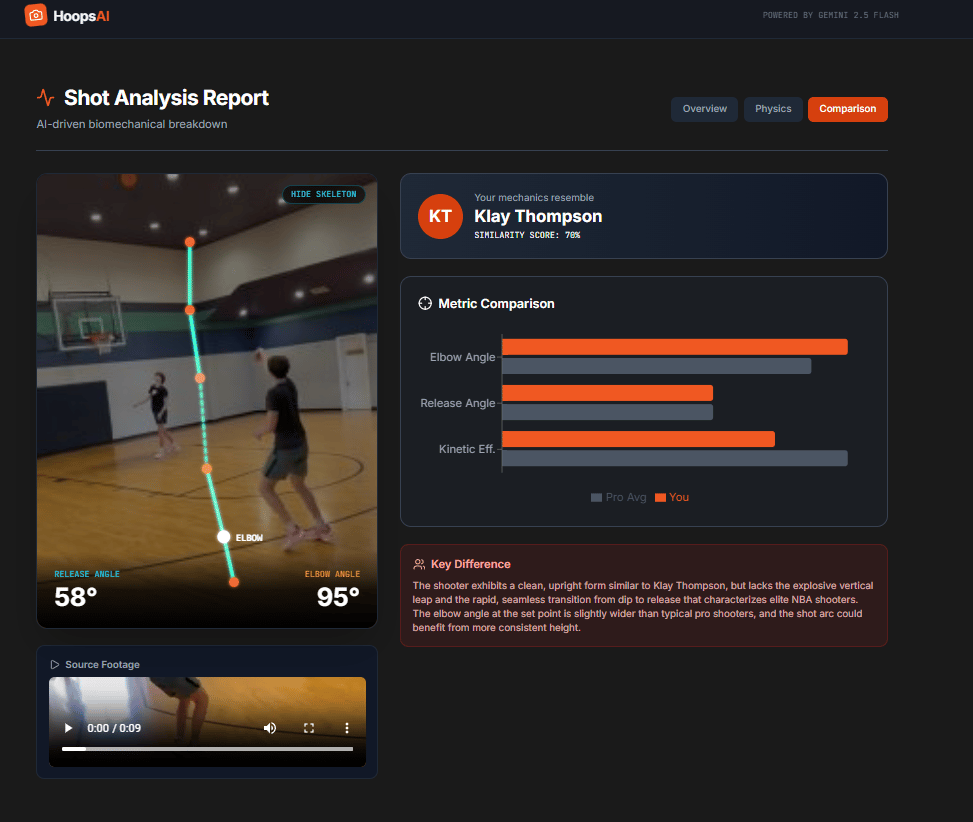

Pro Comparison Mode: Side-by-side syncing with professional shooters, including skeleton overlays and frame-by-frame matching for real-time visual comparison.
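Two of these metrics are plain geometry once a pose estimator has located the joints. The elbow angle is the angle between the shoulder-elbow and wrist-elbow vectors. The arc height follows from release speed and angle through projectile motion. Here is a minimal Python sketch, assuming 2D keypoints are already available from some pose model and ignoring air drag. The helper names are hypothetical.

```python
import math


def joint_angle(a, b, c):
    """Angle in degrees at joint b formed by points a-b-c, e.g. shoulder-elbow-wrist.

    Points are (x, y) keypoints; a and c must differ from b.
    """
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    cos_theta = dot / (math.hypot(*v1) * math.hypot(*v2))
    cos_theta = max(-1.0, min(1.0, cos_theta))  # guard against rounding outside [-1, 1]
    return math.degrees(math.acos(cos_theta))


def apex_height(release_height_m, release_speed_ms, release_angle_deg, g=9.81):
    """Peak height of the ball in meters for a drag-free projectile."""
    vy = release_speed_ms * math.sin(math.radians(release_angle_deg))
    return release_height_m + vy * vy / (2 * g)
```

Running `joint_angle` on every frame, rather than only at release, is what makes a phase-by-phase breakdown possible.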

2. The Technical Advantage

Gemini 3 processes the video one frame at a time, tracking body segments, alignment and motion physics throughout the shot. This granular, biomechanics-level breakdown is what makes the analysis feel like a real professional coach, except it happens in seconds.

It’s not just “spotting mistakes.” It’s analyzing the entire kinetic chain, identifying where power leaks, timing issues or alignment problems occur and explaining the why behind each correction.

X. What Is The Hand Tracking Fluid Simulator?

Answer

This app uses your webcam to track one or two hands. As you move, fluid-like effects and particles flow around your hands on screen. It blends computer vision with simple physics-based visuals.

Key takeaways

- Uses hand tracking for input, not a mouse
- Renders fluid or particle fields in response
- Runs in real time in a browser
- Useful for art, demos or gesture experiments

Critical insight

It points toward interfaces where your body becomes the main controller for visuals.

Sometimes the most impressive demos are the simplest in concept. This simulator tracks your hands via webcam and creates fluid dynamic effects that respond to your movement in real-time.

1. How It Works

Hold up one hand and the system tracks it. Add a second hand and it tracks both. Move them around and visual effects follow your hands – particles flowing, energy fields responding, dynamic interactions occurring.
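One simple way to make particles respond to a tracked hand takes three steps. Estimate the hand's velocity from its position in consecutive frames, push nearby particles along that velocity with a falloff, then damp every particle so the motion settles. Here is a minimal Python sketch. It assumes hand positions arrive as normalized 0-1 screen coordinates, which is how hand-tracking libraries such as MediaPipe typically report them. `push_from_hand`, `advance` and their tuning constants are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Particle:
    x: float
    y: float
    vx: float = 0.0
    vy: float = 0.0


def hand_velocity(prev_pos, curr_pos, dt):
    """Estimate hand velocity from its tracked position in two consecutive frames."""
    return ((curr_pos[0] - prev_pos[0]) / dt, (curr_pos[1] - prev_pos[1]) / dt)


def push_from_hand(particles, hand_pos, hand_vel, radius=0.15, strength=0.8):
    """Add the hand's motion to particles within `radius`, strongest at the hand itself."""
    hx, hy = hand_pos
    for p in particles:
        dist = math.hypot(p.x - hx, p.y - hy)
        if dist < radius:
            falloff = 1.0 - dist / radius  # 1 at the hand, 0 at the edge of its reach
            p.vx += strength * falloff * hand_vel[0]
            p.vy += strength * falloff * hand_vel[1]


def advance(particles, damping=0.96, dt=1 / 60):
    """Damp and move every particle once per frame so the 'fluid' settles when hands stop."""
    for p in particles:
        p.vx *= damping
        p.vy *= damping
        p.x += p.vx * dt
        p.y += p.vy * dt
```

Keeping the push separate from `advance` is what makes two-hand tracking easy. Call `push_from_hand` once per detected hand, then `advance` once per frame.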

2. The Appeal

This is pure fun and experimentation. But that playfulness demonstrates an important capability: real-time computer vision integration with generative effects. This technology could be applied to gesture-based interfaces, interactive art or accessibility tools.

XI. Earth Simulator With Weather Control

My prompt for this one was comprehensive: “Make a perfect 3D representation of Earth on the globe with a layer above it for atmosphere that looks like clouds that interact perfectly like clouds”.

I want to build a high quality 3D globe of Earth with a separate atmospheric layer that renders clouds in a realistic way. Use Three.js and any supporting libraries needed to make the Earth and cloud layer look as close to reality as possible.

Add sliders that control weather variables like wind, temperature and moisture. Include an AI-powered prompt window where users can type natural language prompts such as “trigger a Category 5 hurricane” or “simulate a mega drought,” and the system updates the visual state.

Place a timeline along the bottom that can speed up time, with toggles to change playback speed. Use it to show how climate and weather evolve over the next 100 years. Add toggles to show and hide both a surface temperature layer and a precipitation layer, each animated over time.

Add a Moon: Orbiting the Earth using a realistic moon texture.

Add Satellites: A constellation of satellites orbiting at different speeds and angles.

Base everything on real climate and weather data where possible but keep visual quality and aesthetics as the top priority.

1. What Was Delivered

A rotatable 3D Earth with realistic cloud layers. The moon is visible in the background and you can adjust multiple environmental parameters like sun position, CO2 levels, cloud density and temperature.

2. The Satellite Feature

This is particularly impressive: Gemini 3 calculates and displays the satellite paths correctly. You can see communication satellites, weather satellites and other orbital objects moving along their trajectories around Earth.
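Orbital paths like these can be derived from Kepler's third law. A circular orbit of radius a around Earth has period T = 2π sqrt(a³/μ), where μ ≈ 3.986 × 10^14 m³/s² is Earth's standard gravitational parameter. Here is a minimal Python sketch that assumes circular orbits and ignores perturbations. `orbital_period` and `position_at` are hypothetical helpers, not the app's code.

```python
import math

MU_EARTH = 3.986004418e14  # m^3/s^2, Earth's standard gravitational parameter
R_EARTH = 6_371_000.0      # m, Earth's mean radius


def orbital_period(altitude_m):
    """Period in seconds of a circular orbit at the given altitude (Kepler's third law)."""
    a = R_EARTH + altitude_m  # for a circle, the semi-major axis is the radius
    return 2 * math.pi * math.sqrt(a ** 3 / MU_EARTH)


def position_at(altitude_m, inclination_deg, t, phase=0.0):
    """Earth-centered (x, y, z) in meters at time t seconds on a circular, inclined orbit.

    The orbit is tilted about the x-axis, so it crosses the equator on that axis.
    """
    a = R_EARTH + altitude_m
    theta = phase + 2 * math.pi * t / orbital_period(altitude_m)
    inc = math.radians(inclination_deg)
    return (
        a * math.cos(theta),
        a * math.sin(theta) * math.cos(inc),
        a * math.sin(theta) * math.sin(inc),
    )
```

At 400 km altitude the period comes out near 92 minutes, close to that of the ISS. At 35,786 km it is roughly one sidereal day, which is why geostationary satellites appear fixed in the sky. Varying altitude and inclination per satellite is enough to get a constellation moving "at different speeds and angles".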

XII. Dot Matrix Image Converter: Creative Deconstruction

This demo is visually stunning. I upload a street photograph and Gemini converts it into a manipulable dot-matrix representation.

1. What You Can Do

Play the timeline to watch the image literally come apart; each pixel becomes an independent dot that can move in 3D space. The dots float, swirl and eventually reassemble into the original image.

- Available Effects: Pull effect, data glitch, event horizon, quantum, helix, implosion.

The Original Image

3D Scenes of the image

2. The Technical Achievement

All calculations happen in the browser in real time. Thousands of individual dots are moving according to different physics or animation rules, all while maintaining the ability to reconstruct into the original image.
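A "come apart and reassemble" effect can be built by giving each dot a home position and animating only an offset that is zero at both ends of the timeline. Here is a minimal Python sketch of a helix-style effect, assuming a grayscale image stored as a 2D list. The sampling step, radius and `helix_position` name are hypothetical, and the app's actual effect formulas are unknown.

```python
import math


def image_to_dots(gray, step=4):
    """Sample every `step`-th pixel of a grayscale image (0-255) into dots at their home positions."""
    dots = []
    for y in range(0, len(gray), step):
        for x in range(0, len(gray[0]), step):
            # brighter pixels become larger dots, halftone-style
            dots.append({"home": (float(x), float(y), 0.0), "size": gray[y][x] / 255})
    return dots


def helix_position(dot, t, radius=40.0, turns=2.0):
    """Position of a dot at animation time t in [0, 1].

    The offset is scaled by sin(pi * t), which is 0 at t = 0 and t = 1, so the dots
    start and end exactly (up to rounding) at their home positions.
    """
    hx, hy, hz = dot["home"]
    spread = math.sin(math.pi * t)
    angle = 2 * math.pi * turns * t + hy * 0.05  # each row starts at a different phase
    return (hx + spread * radius * math.cos(angle),
            hy,
            hz + spread * radius * math.sin(angle))
```

Other effects, such as implosion or a data glitch, would just swap in a different offset function while keeping the same zero-at-the-ends envelope.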

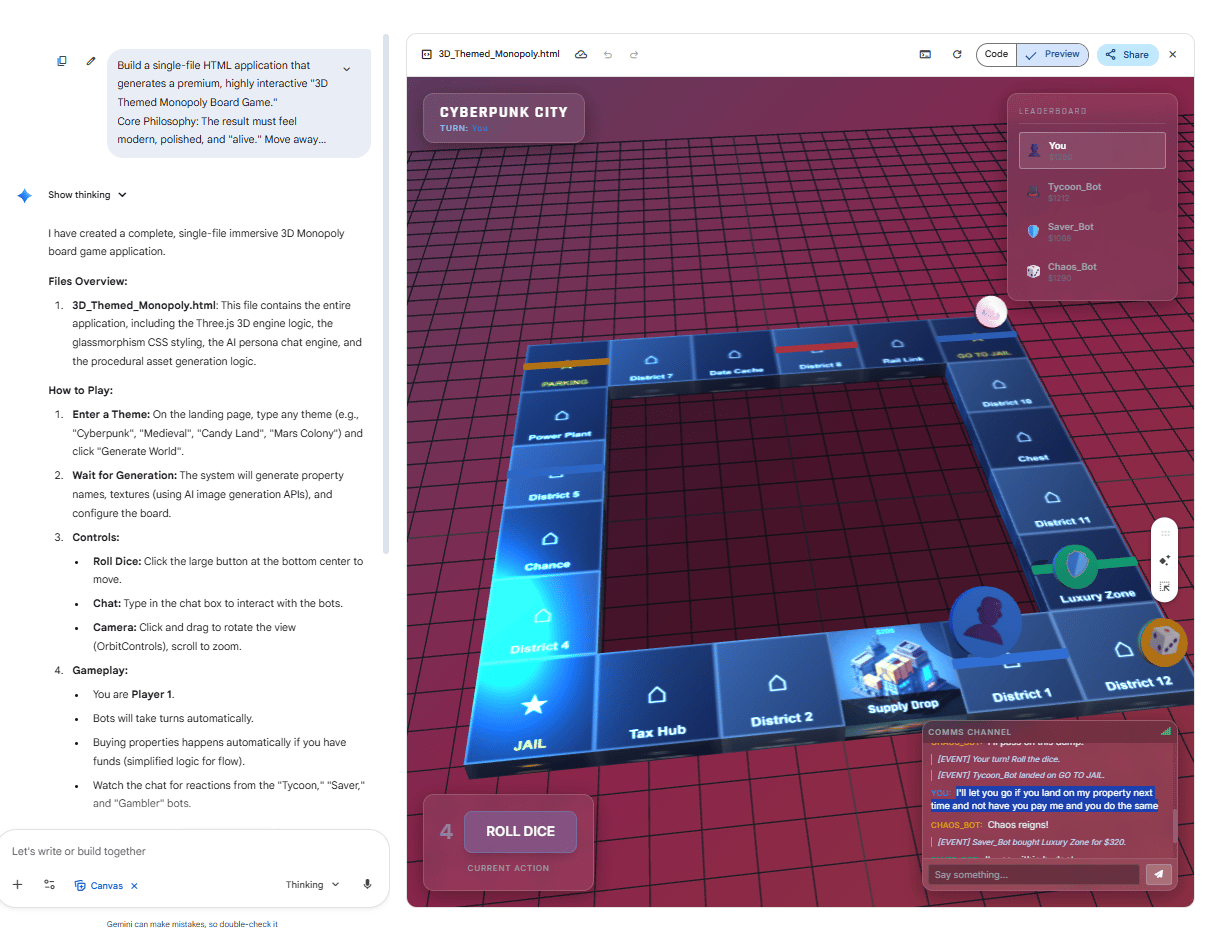

XIII. Custom Monopoly Board Generator: Themed Gameplay

The final demo combines game logic, visual design, AI opponents and natural language interaction into a fully playable Monopoly game with a customizable theme.

1. The Concept

Describe a theme (I use Cyberpunk) and Gemini generates a complete Monopoly board with themed properties, appropriate pricing, custom game pieces and playable rules.

-

Themed Elements: Cyberpunk City-themed property names, custom game pieces and appropriate color schemes.

-

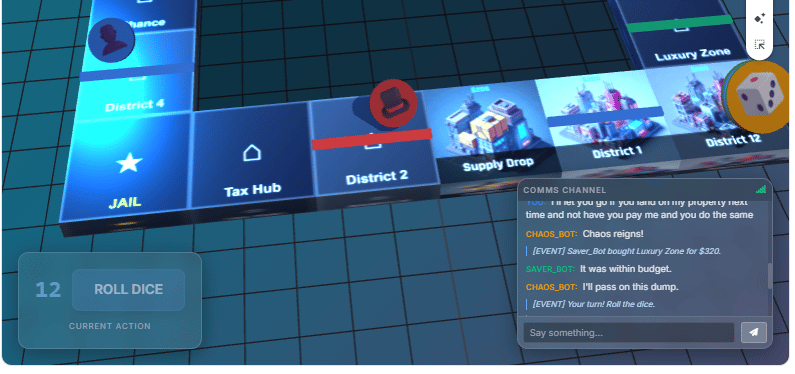

Game Functionality: Dice rolling, property purchasing, AI opponents, trading system and negotiation through chat.
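Under the theme, the board mechanics are standard Monopoly rules: 40 squares, $200 for passing GO, and three doubles in a row sends a player to jail. Here is a minimal Python sketch of movement only. Property, rent, cards and AI negotiation are left out, and the function names are hypothetical.

```python
import random

BOARD_SIZE = 40   # squares on a standard board; GO is square 0
JAIL = 10         # the Jail square
GO_SALARY = 200   # collected when passing or landing on GO


def roll_dice(rng):
    """Roll two six-sided dice; return (total, whether they show doubles)."""
    d1, d2 = rng.randint(1, 6), rng.randint(1, 6)
    return d1 + d2, d1 == d2


def move(position, cash, steps):
    """Move a token, wrapping around the board and paying the GO salary when it wraps."""
    if position + steps >= BOARD_SIZE:
        cash += GO_SALARY
    return (position + steps) % BOARD_SIZE, cash


def take_turn(position, cash, rng):
    """Roll, move and roll again on doubles; a third double in a row goes straight to jail.

    Property purchases, rent, cards, the Go To Jail square and the rules for leaving
    jail are left out of this sketch.
    """
    for roll_number in range(1, 4):
        total, doubles = roll_dice(rng)
        if doubles and roll_number == 3:
            return JAIL, cash
        position, cash = move(position, cash, total)
        if not doubles:
            break
    return position, cash
```

A themed version only has to swap the names and prices attached to each square. The movement rules above stay the same whatever the theme.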

2. The AI Opponent Feature

This is particularly clever. You can chat with AI players to negotiate deals: “I’ll let you go if you land on my property next time and not have you pay me and you do the same”. The AI opponents understand and respond to strategic negotiation.

3. The Honest Limitation

It’s not always perfect, but if you kept working on it and building it out, it could become something really cool.

The foundation is there; it’s a playable game with themed elements but it would need refinement for production quality.

XIV. Conclusion: Accessibility Is the Revolution

The impressive part of my showcase isn’t any single demo; it’s the totality: 12 diverse, functional applications created through conversation with Gemini 3 in this post. Each one represents something that would have required significant development time and specialized expertise just months ago.

The real question isn’t “Can AI build perfect applications?” It’s “Can AI lower the barrier to building useful things enough that significantly more people can create software?” Based on these demos, the answer is clearly yes.

The tools are available now. The capabilities exist today. What will you build with Gemini 3?
