You think you’re getting options. You’re not. AI repeats the same idea in disguise. Here’s the simple fix most people miss. Once you see it, you can’t unsee it.

TL;DR

In 2026, the primary failure in AI-generated options is the “Gravity Problem”: the model’s tendency to provide three variations of the same safe answer. Most users unknowingly settle for “rearranged copies” that lack structural or logical diversity. To solve this, you must move from Variation Prompting (changing words) to Divergent Prompting (forcing structural conflict).

The most advanced users rely on MECE to remove overlap, Persona Rotation to force conflicting viewpoints and Dimension Locking to test one variable at a time. For high-stakes decisions, the current gold standard is deploying Sub-Agents (isolated AI instances) to prevent “contextual pull” between options, ensuring that Option B isn’t quietly influenced by the existence of Option A.

Key points

- Fact: Claude Code now supports subagents with separate context windows, which makes it one of the clearest real-world examples of siloed AI analysis today. Claude Cowork also pushes in a similar direction for desktop task workflows.

- Mistake: Assuming three different “tones” (e.g., professional, witty, urgent) create three different “strategies”. Tone is a cosmetic layer; strategy requires a change in underlying logic.

- Action: Run the Verification Test (Section VI) on your next multi-option generation. Force the AI to explain why its options are fundamentally different; if it can’t, reject the output.

Critical insight

The real “unfair advantage” in 2026 is Siloed Analysis. Truly independent thought in AI is only possible when the instances generating the options cannot see each other’s work.


I. Introduction

You open ChatGPT and ask for 3 different ways to start a cold email. It gives you 3 different options: one begins with a question, another with a compliment and the third with a statistic.

You read through them, pick the one that feels right and move on, thinking you made a smart choice.

But you didn’t.

What you got was the same answer, rewritten 3 times. The logic and structure didn’t change and even the tone barely shifted. Only the wording did.

This happens more often than people realize. It affects more than just email writing. Instead of getting new thinking, you get the same idea repeated with small changes.

Understanding why this happens is the first step to fixing it. And once you fix it, the quality of everything you produce with AI jumps fast.

II. Why AI Always Defaults to One Answer

AI usually finds one answer it believes is the safest and strongest. Then it creates nearby versions of that answer to fill the number of options you asked for. Researchers often describe this as a gravity problem. Everything gets pulled toward the same center.

Key takeaways

- The model predicts the most likely useful answer first.

- Extra versions often orbit the same core logic.

- Similarity feels safer to the model than large divergence.

- Fixing this requires changing the request structure, not just wording.

When you ask AI to give you multiple options, here’s what’s actually happening behind the scenes:

- First, the model identifies the single best answer to your question.

- Then it generates variations of that answer to fill out the number of options you asked for.

- Then it presents those variations as if they’re different.

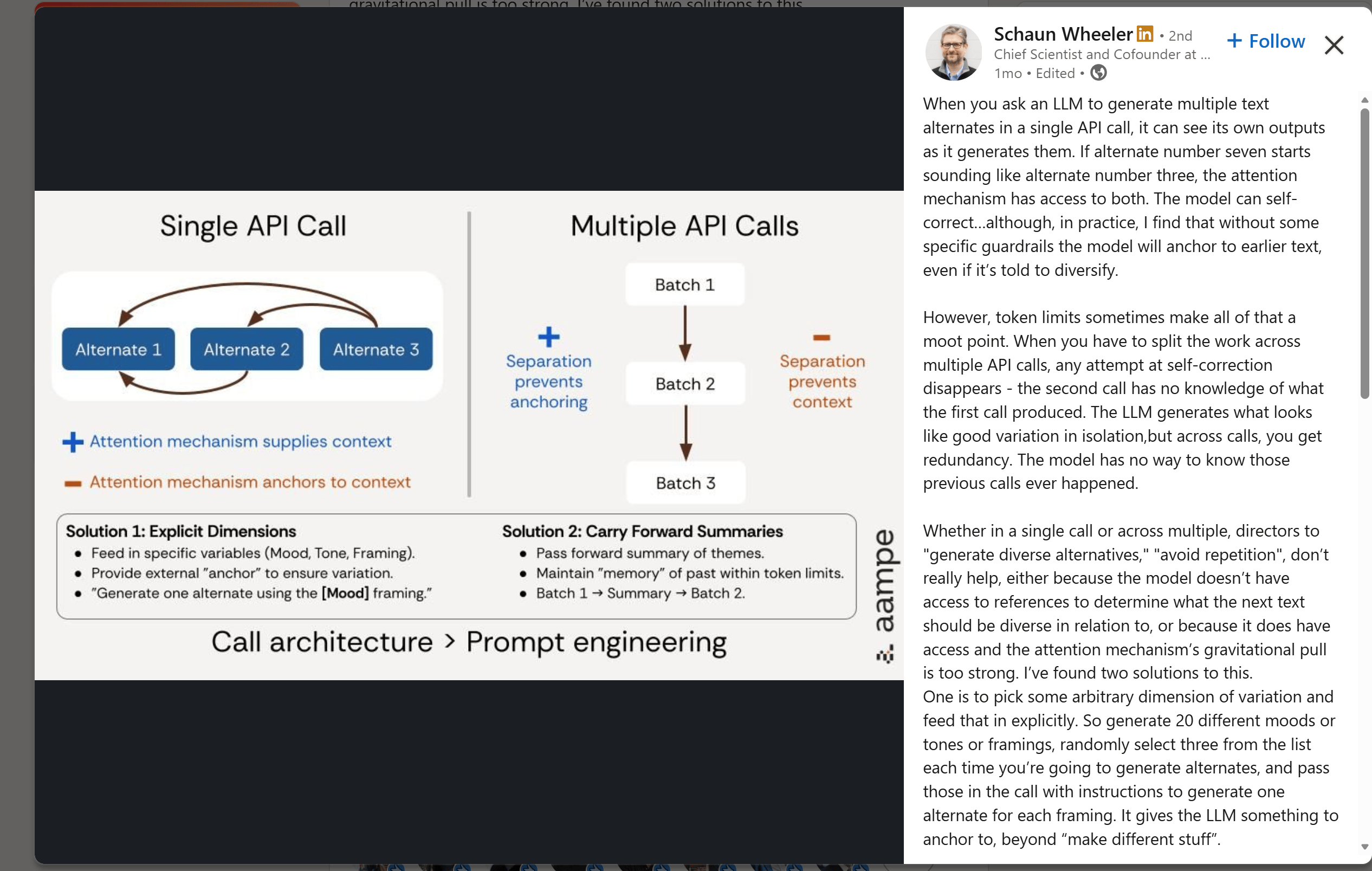

AI researchers call this the “gravity problem”. Every option orbits the same central answer, pulled in by the same gravitational force. The wording changes but the structure, logic and perspective stay the same.

Source: LinkedIn.

Here’s a real example. You ask for 2 different follow-up email openers after a sales call:

- Version 1: “It was great connecting with you today. I wanted to follow up on a few things we discussed”.

- Version 2: “Thanks for taking the time to meet earlier. I wanted to circle back on some key points from our conversation”.

Sound different, right? Now, read them twice and you’ll see exactly what happened: both open with a polite acknowledgment, both use a soft transition, both set up the same kind of body.

The words are different but the emails are fundamentally the same.


III. Method 1: MECE Constraint

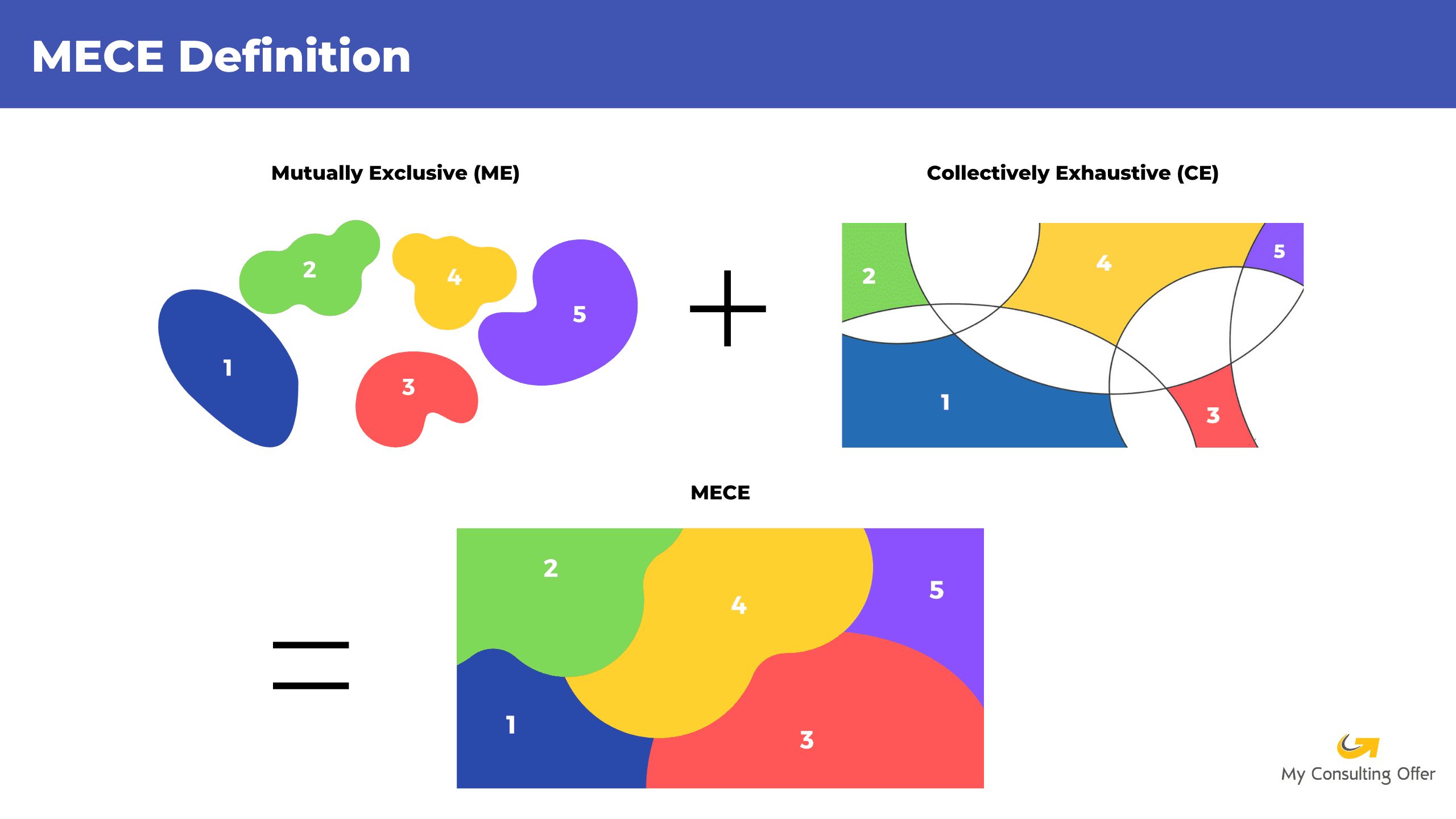

MECE stands for Mutually Exclusive, Collectively Exhaustive. It’s a framework that came out of McKinsey and the management consulting world, where it was originally used to structure problems and presentations without overlap or gaps.

- Mutually exclusive means each option is clearly different, with no shared logic, shared structure or repeated ideas.

- Collectively exhaustive means all options together cover the full solution space, so nothing important is missing.

When you include this phrase in a prompt, the model tends to treat it as a hard structural constraint rather than a stylistic preference and works to produce non-overlapping outputs.

1. Why It Works

Most prompts only tell the AI what to produce but they don’t say how different the outputs should be. That’s why outputs often feel repetitive.

MECE fixes that by telling the model that each answer must stand on its own and take a different angle.

2. The Prompt Template

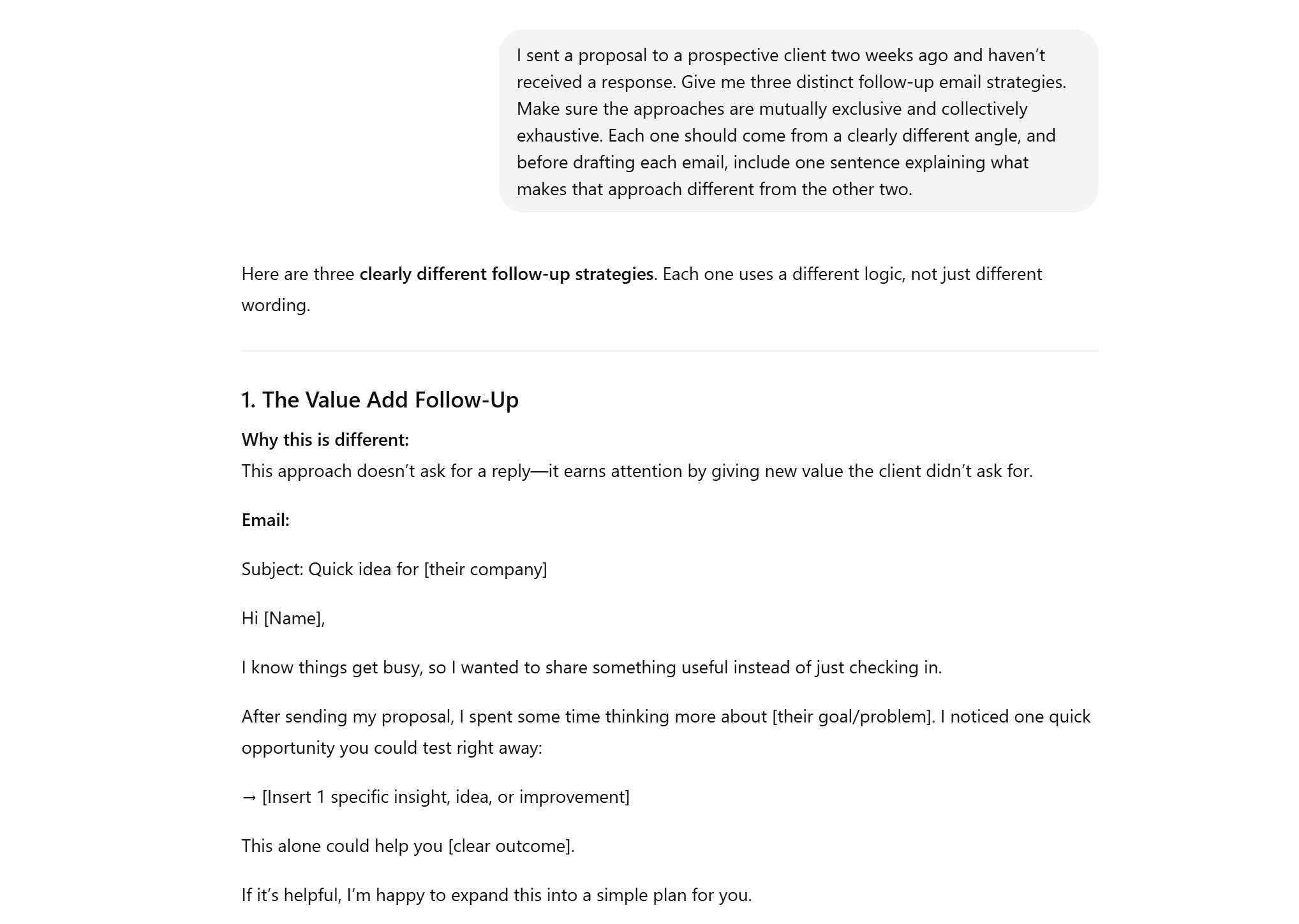

Here’s an example that shows how to apply it:

I sent a proposal to a prospective client two weeks ago and haven’t received a response. Give me three distinct follow-up email strategies. Make sure the approaches are mutually exclusive and collectively exhaustive. Each one should come from a clearly different angle and before drafting each email, include one sentence explaining what makes that approach different from the other two.

The one-sentence explanation before each option forces the model to think about structure first. If it can’t explain the difference, it has to go back and rethink the option, which is exactly the point.
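If you reuse this prompt often, it can help to generate it from a small function so the MECE wording never gets dropped by accident. The sketch below is one possible way to do that in Python. The function name `build_mece_prompt` and its parameters are hypothetical and exist only for illustration; the wording follows the example prompt above.

```python
def build_mece_prompt(situation: str, deliverable: str, n: int = 3) -> str:
    """Return a prompt asking for n non-overlapping options (hypothetical helper)."""
    if n < 2:
        raise ValueError("MECE only makes sense for two or more options")
    return (
        f"{situation.strip()} "
        f"Give me {n} distinct {deliverable}. "
        "Make sure the approaches are mutually exclusive and collectively exhaustive. "
        "Each one should come from a clearly different angle and, before drafting each one, "
        f"include one sentence explaining what makes that approach different from the other {n - 1}."
    )


prompt = build_mece_prompt(
    situation="I sent a proposal to a prospective client two weeks ago and haven't received a response.",
    deliverable="follow-up email strategies",
)
print(prompt)
```

The one-sentence justification stays hard-coded on purpose: it is the part of the prompt that lets you check the result afterwards.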

3. Where to Apply It

MECE works across almost any task requiring distinct options:

- Research angles on a topic

- Strategic approaches to a business problem

- Data analysis frameworks

- Writing proposals or content campaigns

- Solutions to a team or operational challenge

Whenever you want variety without repetition, this constraint makes the output sharper and more useful.

IV. Method 2: Persona Rotation

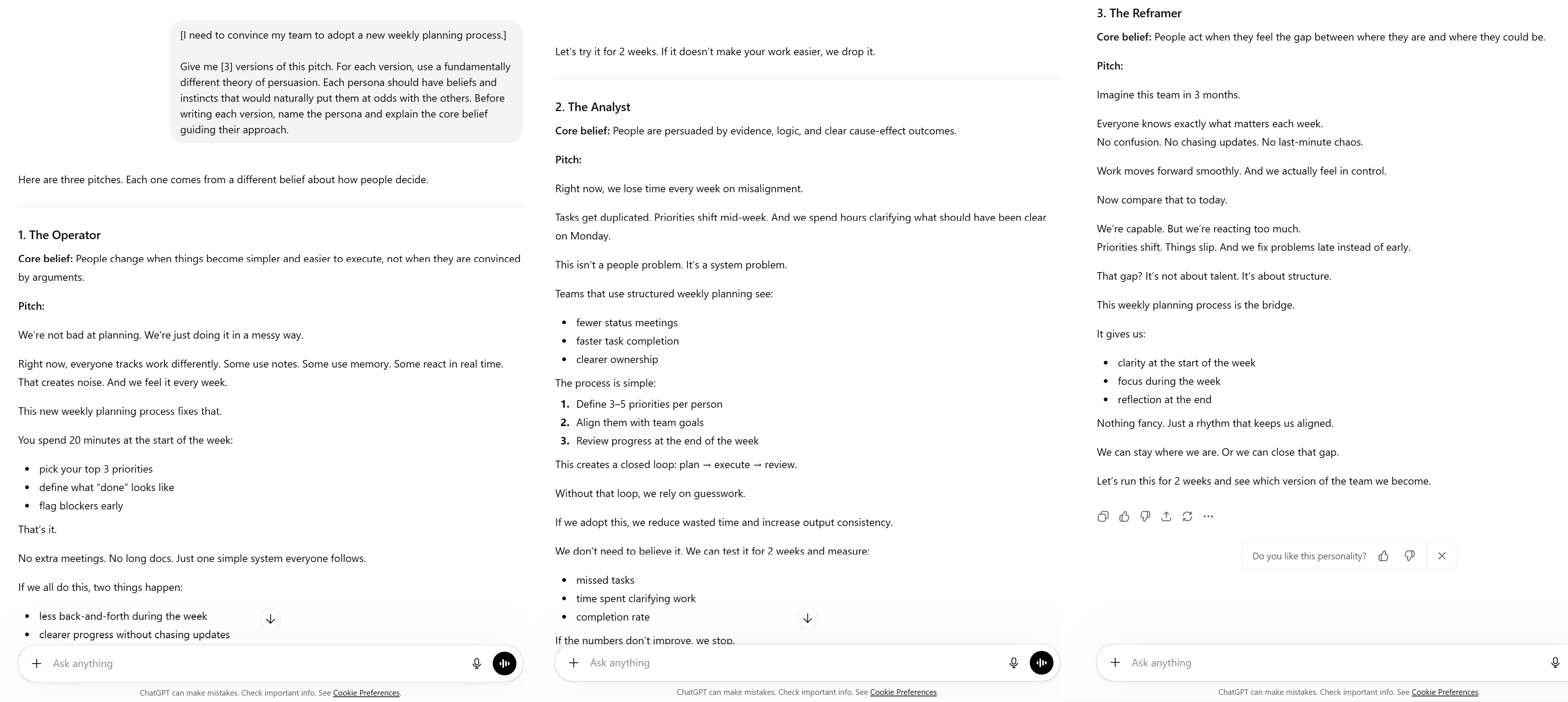

Instead of asking for “different versions,” you assign the AI clear personas with different ways of thinking. Not just different tones but different beliefs about how problems should be solved.

That conflict is what creates real diversity. So, if personas only vary in style, the outputs stay similar. But if their beliefs conflict, the outputs naturally diverge.

1. A Real-World Example

Imagine you’re a manager rolling out a new weekly planning process. You don’t need 3 similar pitches. You need 3 approaches that feel completely different.

Here are 3 personas with conflicting views on persuasion:

| Persona | Core Belief | Approach |
|---|---|---|
| The Minimalist | One compelling truth beats many arguments | Strip it down to the single most important point and trust that it lands |
| The Analyst | People are persuaded by data, not emotion | Eliminate every possible doubt with numbers, research and logical argument |
| The Reframer | People act when they feel the gap between now and what’s possible | Show them where they are, where they could be and make them feel the distance |

Now these personas would argue with each other.

- The Minimalist thinks the Analyst is overwhelming.

- The Analyst thinks the Reframer is too emotional.

- The Reframer thinks the Minimalist is too simplistic.

But that tension is exactly what you want. It forces the outputs to be fundamentally different.

2. The Prompt Template

You can structure your prompt like this:

[I need to convince my team to adopt a new weekly planning process.]

Give me [3] versions of this pitch. For each version, use a fundamentally different theory of persuasion. Each persona should have beliefs and instincts that would naturally put them at odds with the others. Before writing each version, name the persona and explain the core belief guiding their approach.

The reason you ask for the persona name and core belief upfront is simple: it gives you a fast way to verify whether the options are truly distinct.
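The template above lets the AI pick its own personas. If you would rather pin them yourself, for example to the three personas from the table, a small helper can assemble the prompt. This is only a sketch: the `Persona` class and `build_persona_prompt` function are hypothetical names, and fixing the personas in advance is a variation on the template, not part of it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    name: str
    core_belief: str


def build_persona_prompt(goal: str, personas: list[Persona]) -> str:
    """Assemble a persona-rotation prompt from explicitly chosen personas."""
    if len(personas) < 2:
        raise ValueError("need at least two personas to create conflict")
    lines = [
        goal.strip(),
        "",
        f"Give me {len(personas)} versions of this pitch, one per persona below. "
        "Each persona holds a belief that puts them at odds with the others.",
        "",
    ]
    for i, p in enumerate(personas, start=1):
        lines.append(f"Persona {i}: {p.name}. Core belief: {p.core_belief}.")
    lines += [
        "",
        "Before writing each version, name the persona and explain "
        "the core belief guiding their approach.",
    ]
    return "\n".join(lines)


personas = [
    Persona("The Minimalist", "One compelling truth beats many arguments"),
    Persona("The Analyst", "People are persuaded by data, not emotion"),
    Persona("The Reframer", "People act when they feel the gap between now and what's possible"),
]
persona_prompt = build_persona_prompt(
    "I need to convince my team to adopt a new weekly planning process.", personas
)
print(persona_prompt)
```

Writing the core belief next to each name keeps the conflict explicit, so the model has less room to fall back on tone-only differences.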

3. Where to Apply It

Use persona rotation when you need real variation, not surface-level edits, like:

- Persuasion and pitch preparation.

- Anticipating objections from different stakeholder types.

- Generating strategic options from competing business philosophies: growth-focused versus risk-averse versus efficiency-driven.

- Exploring research topics from competing academic or ideological angles.

- Creative briefs where you need genuinely different creative territories.

V. Method 3: Dimension Locking

Instead of asking AI to generate completely different versions, you ask it to change only one specific element at a time while keeping everything else the same. This gives you clean comparisons without confusion.

It’s most useful when you already have a strong base and want to see what actually makes a difference.

1. A Real-World Example

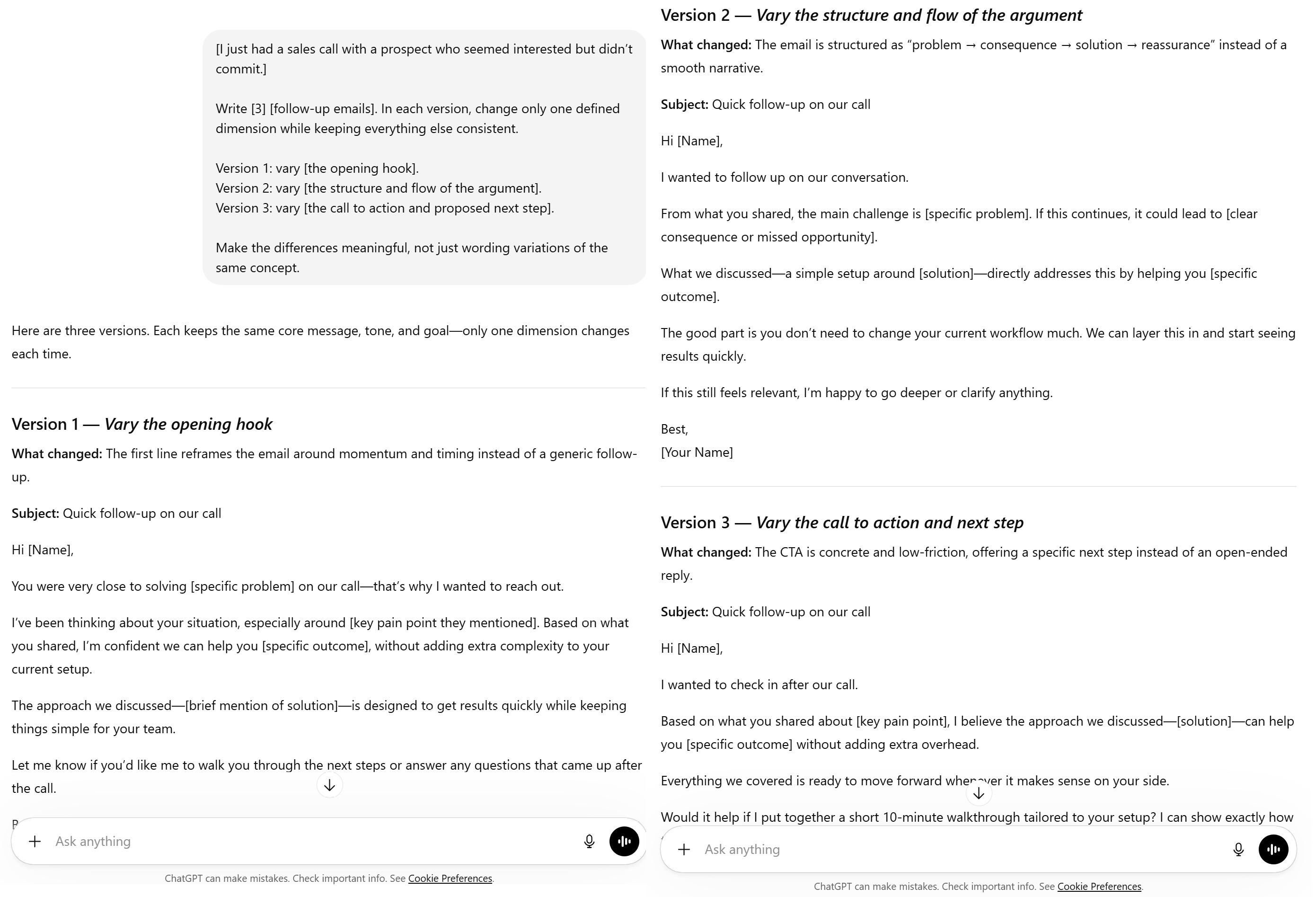

Let’s take an example to make this clear: You just had a sales call with a prospect who seemed interested but didn’t commit.

You want to send a follow-up email but you want to test three structural variations, one element changed at a time:

| Version | What Changes | What Stays the Same |
|---|---|---|
| Version 1 | Opening hook only | Argument flow, body content, CTA |
| Version 2 | Argument flow (overall email structure) | Hook, body content, CTA |
| Version 3 | Call to action only | Hook, argument flow, body |

2. The Prompt Template

To do this, you give a very clear prompt. Otherwise, AI will just rephrase the same idea. You can use something like this:

[I just had a sales call with a prospect who seemed interested but didn’t commit.]

Write [3] [follow-up emails]. In each version, change only one defined dimension while keeping everything else consistent.

Version 1: vary [the opening hook].

Version 2: vary [the structure and flow of the argument].

Version 3: vary [the call to action and proposed next step].

Make the differences meaningful, not just wording variations of the same concept.
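One way to keep the "what changes / what stays the same" bookkeeping honest is to generate the prompt from a list of elements, so every version states both sides explicitly. The following is a minimal sketch under that assumption. The function name `build_dimension_lock_prompt` and the `ELEMENTS` list are hypothetical, and spelling out the locked elements per version is an addition to the template above.

```python
ELEMENTS = ["opening hook", "argument flow", "body content", "call to action"]


def build_dimension_lock_prompt(
    situation: str, deliverable: str, elements: list[str], vary: list[str]
) -> str:
    """Build a prompt where each version varies one element and locks the rest."""
    unknown = [d for d in vary if d not in elements]
    if unknown:
        raise ValueError(f"not in the element list: {unknown}")
    lines = [
        situation.strip(),
        "",
        f"Write {len(vary)} {deliverable}. In each version, change only one "
        "defined dimension while keeping everything else consistent.",
        "",
    ]
    for i, dim in enumerate(vary, start=1):
        # Everything except the varied dimension is named as locked.
        locked = ", ".join(e for e in elements if e != dim)
        lines.append(f"Version {i}: vary only the {dim}. Keep the same: {locked}.")
    lines += [
        "",
        "Make the differences meaningful, not just wording variations of the same concept.",
    ]
    return "\n".join(lines)


lock_prompt = build_dimension_lock_prompt(
    situation="I just had a sales call with a prospect who seemed interested but didn't commit.",
    deliverable="follow-up emails",
    elements=ELEMENTS,
    vary=["opening hook", "argument flow", "call to action"],
)
print(lock_prompt)
```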

3. Where to Apply It

You can use this method anywhere you need clarity, such as:

- Comparing different opening strategies for emails, proposals or presentations.

- Testing different CTA approaches on the same underlying offer.

- Understanding the isolated impact of structural changes in reports or analyses.

- A/B concept development for marketing materials.

- Iterating on creative work where the base is strong but specific elements need refinement.

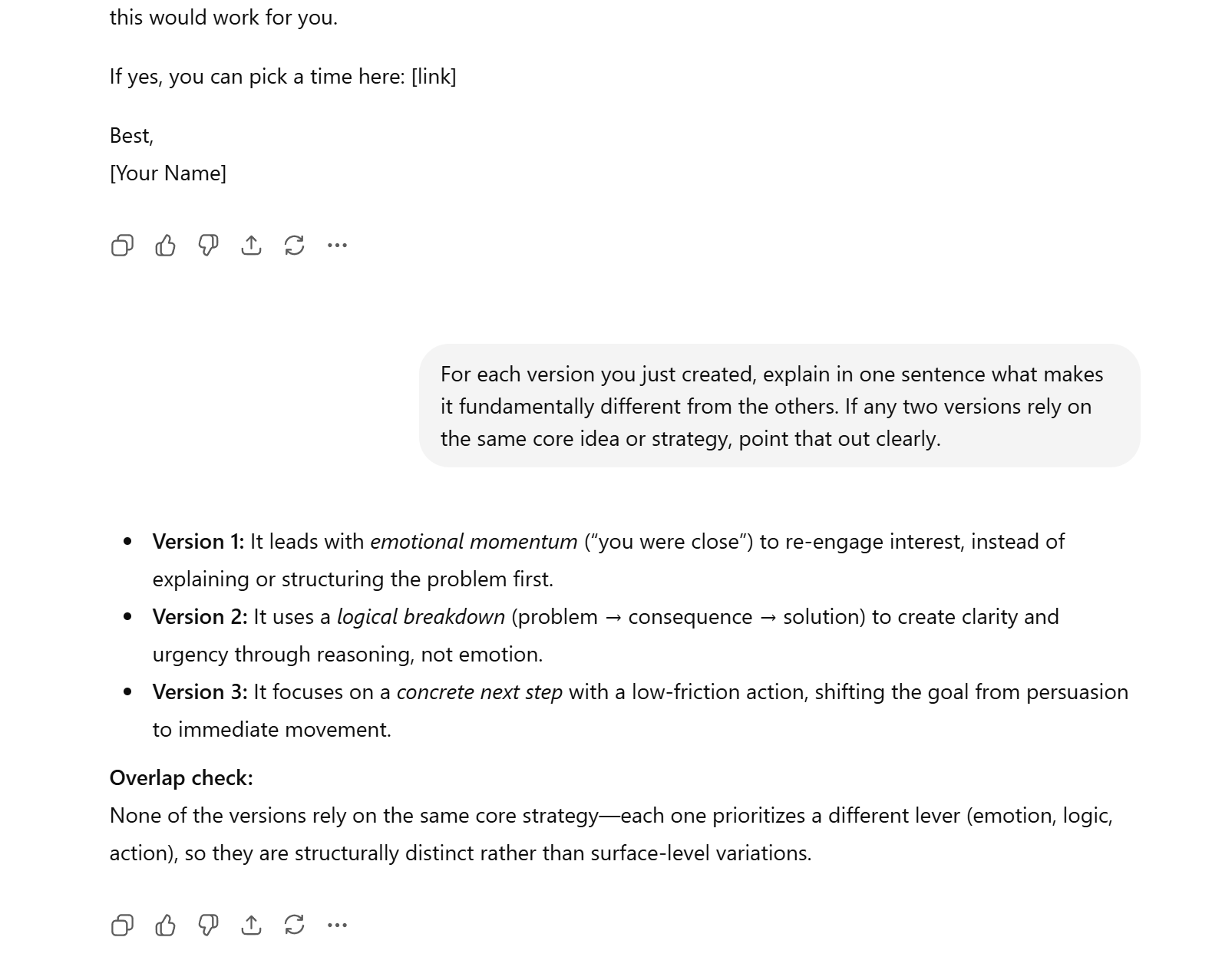

VI. Bonus 1: The Verification Test

After running any of the 3 methods above, add this follow-up prompt to make the AI check its own work:

For each version you just created, explain in one sentence what makes it fundamentally different from the others. If any two versions rely on the same core idea or strategy, point that out clearly.

This simple check does a lot:

- First, it gives you a quick signal. If those one-line explanations sound similar, then the outputs are similar, no matter how different they look on the surface.

- Second, it forces the AI to judge its own work. Instead of blindly trusting polished text, you make the model prove that it actually created real variation. If it didn’t, you’ll see it immediately.

The best part is that it takes less than a minute and it is a copy-paste addition to any generation session. That small step can save you from building an entire plan on 3 versions of the same idea.
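If you run this check across many generations, you could add a rough automatic screen on top of reading the explanations yourself. The sketch below compares the one-sentence explanations by word overlap (Jaccard similarity) and flags pairs above a threshold. This is an assumption-heavy illustration, not part of the method: the function names and the 0.5 threshold are hypothetical, and word overlap is only a crude proxy, since two explanations can share vocabulary while describing different strategies or the reverse.

```python
import re
from itertools import combinations


def _words(text: str) -> set[str]:
    """Lowercased set of words in a sentence."""
    return set(re.findall(r"[a-z']+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Share of distinct words the two sentences have in common."""
    wa, wb = _words(a), _words(b)
    if not wa or not wb:
        return 0.0
    return len(wa & wb) / len(wa | wb)


def flag_overlaps(explanations: dict[str, str], threshold: float = 0.5):
    """Return (label_a, label_b, score) for every pair at or above the threshold."""
    flagged = []
    for (a, text_a), (b, text_b) in combinations(explanations.items(), 2):
        score = jaccard(text_a, text_b)
        if score >= threshold:
            flagged.append((a, b, round(score, 2)))
    return flagged


explanations = {
    "Version 1": "Opens with a polite thank-you and then recaps the call.",
    "Version 2": "Opens with a polite thank-you and then recaps the meeting.",
    "Version 3": "Leads with a hard deadline to create urgency.",
}
for a, b, score in flag_overlaps(explanations):
    print(f"{a} and {b} look similar (word overlap {score})")
```

Treat a flagged pair as a reason to reread those two options, not as a verdict.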

If prompts alone still don’t create enough separation, the next step is not better wording. It’s isolation.


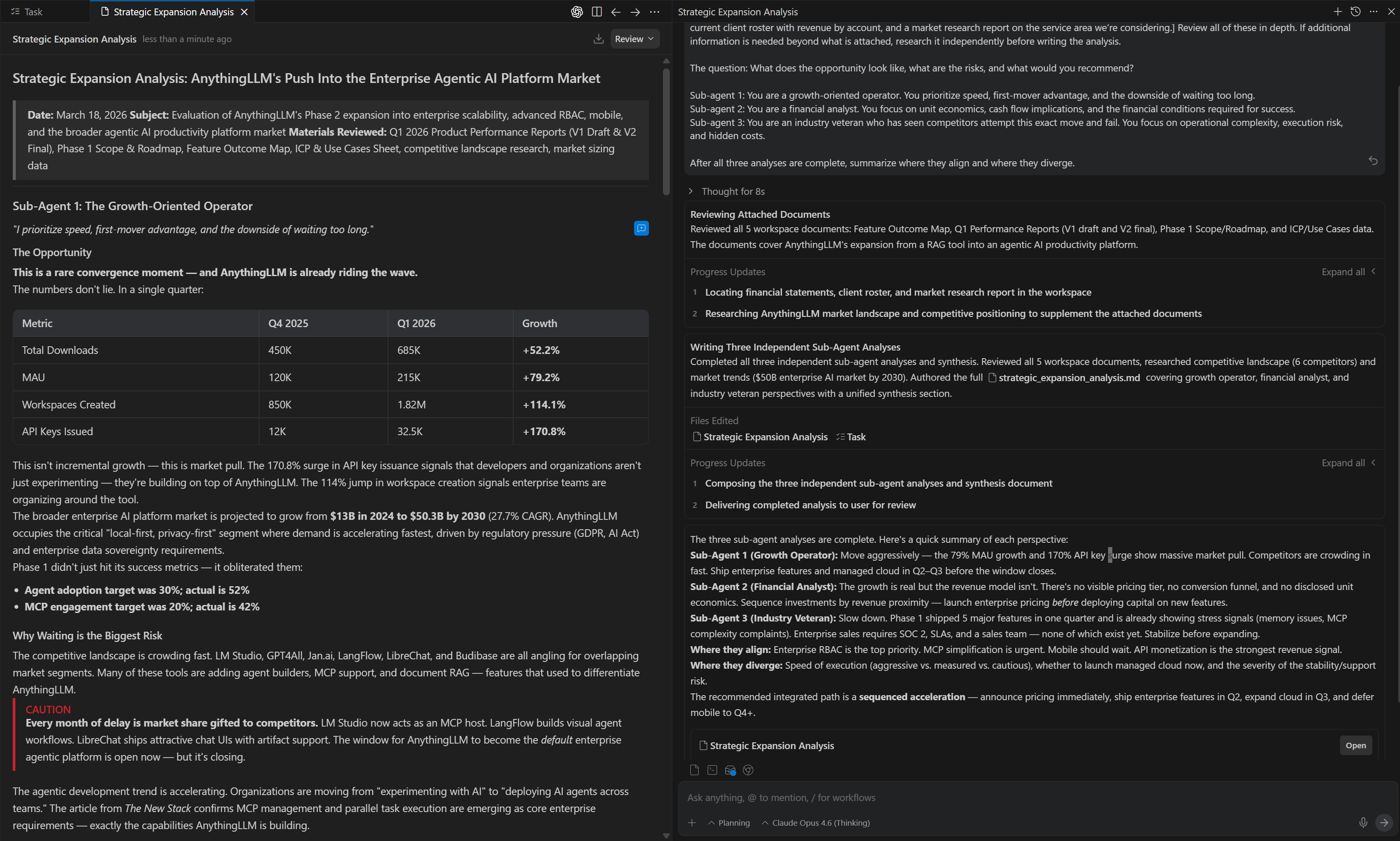

VII. Bonus 2: Sub-Agents: The Nuclear Option

There’s a hidden problem most people miss when they ask one AI to generate 3 options in the same chat: the model sees the options it already produced.

That means Option 2 is influenced by Option 1 and Option 3 is shaped by both. The outputs may look different on the surface but they still pull toward each other.

You can reduce that with better prompting but if you want truly independent answers, you have to remove the shared context completely.

That’s where sub-agents come in.

1. What Sub-Agents Do

A sub-agent is basically a separate AI instance created by a parent AI. Each sub-agent has its own isolated context window (its own separate “brain”) with no awareness of what the other sub-agents are doing or have already produced.

The parent AI gives each sub-agent a specific angle, persona or analytical lens. Each one works alone and reaches its own conclusion. After that, the parent reviews all three results and summarizes where they agree and where they differ.
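The isolation idea can be sketched in a few lines of Python. Tools like Claude Code handle this for you, so the code below is only an illustration of the principle, not how any particular product implements it. `call_model` is a hypothetical placeholder for whatever function sends a list of messages to a model and returns text; it is not a real library API. The key detail is that each sub-agent gets a fresh message list, so no analysis can see another one.

```python
from typing import Callable

Message = dict[str, str]
ModelFn = Callable[[list[Message]], str]  # hypothetical model-calling function


def run_isolated(
    shared_context: str, question: str, roles: list[str], call_model: ModelFn
) -> list[str]:
    """Run one model call per role; each call starts from a fresh message list."""
    analyses = []
    for role in roles:
        # Fresh list per sub-agent: nothing from earlier analyses is included.
        messages = [
            {"role": "system", "content": role},
            {"role": "user", "content": f"{shared_context}\n\nQuestion: {question}"},
        ]
        analyses.append(call_model(messages))
    return analyses


def synthesize(analyses: list[str], call_model: ModelFn) -> str:
    """Parent step: only here are all analyses placed in one context."""
    numbered = "\n\n".join(
        f"Analysis {i}:\n{text}" for i, text in enumerate(analyses, start=1)
    )
    instruction = "Summarize where these analyses align and where they diverge."
    return call_model([{"role": "user", "content": f"{instruction}\n\n{numbered}"}])


# Stub model for demonstration: it records what each call was shown.
seen: list[list[Message]] = []


def stub_model(messages: list[Message]) -> str:
    seen.append(messages)
    return f"reply {len(seen)}"


roles = [
    "You are a growth-oriented operator.",
    "You are a financial analyst.",
    "You are an industry veteran focused on execution risk.",
]
analyses = run_isolated(
    "Financials and market research go here.", "Should we expand?", roles, stub_model
)
summary = synthesize(analyses, stub_model)
print(analyses, summary)
```

With a real model function in place of `stub_model`, the three analyses could also run in parallel, since none depends on another.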

2. The Prompt Template

You can use a structure like this:

I want three sub-agents to independently evaluate the question below. Each sub-agent must work in complete isolation and should not have access to the others’ analysis.

Here is the context all three sub-agents must review before forming their perspective. [I have attached our financial statements from the last two years, our current client roster with revenue by account and a market research report on the service area we’re considering.] Review all of these in depth. If additional information is needed beyond what is attached, research it independently before writing the analysis.

The question: [Should we expand into [specific service line]? What does the opportunity look like, what are the risks and what would you recommend?]

Sub-agent 1: You are a growth-oriented operator. You prioritize speed, first-mover advantage and the downside of waiting too long.

Sub-agent 2: You are a financial analyst. You focus on unit economics, cash flow implications and the financial conditions required for success.

Sub-agent 3: You are an industry veteran who has seen competitors attempt this exact move and fail. You focus on operational complexity, execution risk and hidden costs.

After all three analyses are complete, summarize where they align and where they diverge.

I tested this prompt in Antigravity.

3. Two Ways to Run It

There are really 2 ways to run this.

| Approach | How It Works | Best For |
|---|---|---|
| You define the angles | Explicitly assign each sub-agent its worldview or role | When you know exactly what perspectives you need |
| Parent AI defines the angles | Remove the role descriptions; let the orchestrator decide | When you want AI to identify the most important analytical lenses itself |

4. What to Keep in Mind

The big thing to remember is that context matters even more here than usual. Because each sub-agent works in isolation, it can only reason from what you give it.

So, if your documents are thin, the output will be thin. If your context is rich (financials, research, constraints, customer details, operating realities), the analysis gets much stronger.

Tool requirement: Sub-agents currently work best in Claude Code and Claude Cowork but you can also try them in Codex or other local agentic tools. They are not available in the standard ChatGPT, Claude or Gemini chat interfaces because those environments do not give each agent its own separate context window.

VIII. Full Framework at a Glance

This framework helps you stop getting repeated answers that just look different on the surface. Each method solves a specific problem.

| Method | Core Mechanism | Best For | Key Phrase |
|---|---|---|---|
| MECE Constraint | Forces no overlap, full coverage of solution space | Any task requiring distinct options | “Mutually exclusive, collectively exhaustive” |
| Persona Rotation | Assigns conflicting worldviews to ensure structural divergence | Persuasion, strategy, creative territories | “Each persona should disagree with the others” |
| Dimension Locking | Changes one element per version, locks everything else | Iterative refinement, A/B development | “Change only [X], keep everything else the same” |
| Verification Test | Self-grading follow-up that surfaces hidden overlap | Use after any of the three methods above | “If two versions share the same idea, tell me” |
| Sub-Agents | Isolated context windows, zero gravitational pull | High-stakes analysis requiring truly independent perspectives | “Create [N] sub-agents to independently analyze” |

I put all 5 copy-paste prompt templates into one document so you can use them immediately.

IX. Conclusion

The next time you ask ChatGPT or Claude for multiple options and they all feel like variations of the same thing, you know that’s not a coincidence. The good news is that the fix is simple:

- Pick one method that fits your situation.

- Add the MECE constraint for anything requiring truly distinct approaches.

- Use persona rotation when you need genuinely different angles on persuasion or strategy.

- Lock dimensions when you’re iterating on something solid and want surgical control.

- And always run the verification test; it takes 30 seconds and catches more overlap than you’d expect.

For important decisions, sub-agents are the cleanest solution available right now.
